Fewer than 1% of HHS’s AI uses are ‘high impact.’ It stands out.

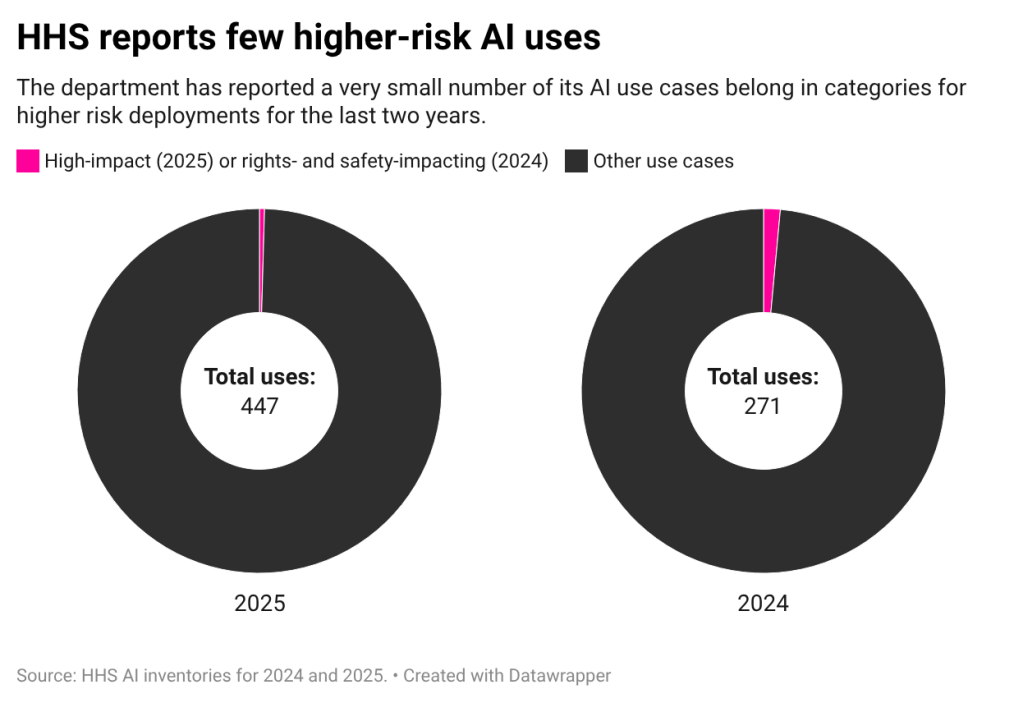

Out of the nearly 450 entries on the Department of Health and Human Services’ most recent AI use case inventory, just two fall into a category that would demand heightened risk management practices, raising questions about the agency’s governance process.

While it’s not clear from the information provided whether HHS is actually misclassifying use cases, the small number of entries classified as “high impact” certainly differs from the counts at several other high-use agencies, and it comes as the department reported substantially more uses than in 2024.

What’s more: The few use cases HHS marked for additional risk management in the previous year’s disclosure are no longer part of that more stringent category. That includes the Office of Refugee Resettlement’s work with Palantir to parse data from U.S. Customs and Border Protection about parents or guardians of children who have been separated from their families.

“When we see an agency like HHS have a pretty significant jump in their overall total number of active use cases while simultaneously seeing no increase in the number of high-impact, I think we should be immediately questioning that,” Quinn Anex-Ries, a senior policy analyst at the Center for Democracy and Technology’s equity in civic technology team, told FedScoop.

The change in risk management for uses like the one at the Administration for Children and Families (ACF), home to the Office of Refugee Resettlement, also raises concerns, as there’s an “extremely low” margin of error for those applications, Anex-Ries said. “We’re talking about a use case that impacts some of the most vulnerable members of our society, that’s children who have been separated from their parents,” he said.

HHS’s two high-impact uses account for less than half of 1% of its inventory. By comparison, high-impact uses make up 23% of the Department of Homeland Security’s inventory, 36% of the Justice Department’s inventory, and 59% of the Department of Veterans Affairs’ inventory in 2025.

To be sure, a couple of other agencies with large inventories also report few or no high-impact uses, and a handful of inventories haven’t even included the category in their 2025 postings. NASA reports that just one of its 425 uses is high impact, and the Department of Commerce didn’t include the category.

HHS is notable, in part, because of the type of work it does.

Valerie Wirtschafter, a Brookings Institution fellow focused on artificial intelligence and emerging technology, told FedScoop she was surprised not to see more high-impact uses within the agency, especially given that the Office of Management and Budget’s AI guidance presumes applications of the technology that could have a significant effect on “human health and safety” are high impact.

They essentially have “a category in high-impact built for them,” Wirtschafter said.

HHS has not responded to multiple requests from FedScoop to answer questions about its inventory. Those requests included detailed lists of questions about the high-impact process, submitted through department press inquiry forms and emailed to spokespeople. Palantir also did not respond to a request for comment on its awareness of the changed risk management approach.

Changing terms

The high-impact designation for AI uses was first outlined in the Trump administration’s April 2025 memo to agencies on AI governance. It came as an evolution of labels created under former President Joe Biden to identify and manage deployments that pose potential risks. Those were known as rights- and safety-impacting uses.

While the labels from each administration differ somewhat, they have a lot in common. The definitions are relatively similar, turning on whether a use serves as “a principal basis” for agency decisions and actions that significantly affect an individual’s rights or safety. President Donald Trump’s guidance mostly streamlines the labels into one category.

Both administrations defined uses that are “presumed” to impact the rights and safety of the public, prescribed heightened risk management steps for any that fit the bill, and set a date to sunset tools that didn’t meet all of the requirements. For Trump, that deadline is coming up in early April.

The types of deployments presumed to need heightened risk management include critical infrastructure uses, medical devices, and biometric identification. Under both administrations, agency officials have had discretion to determine whether a presumed use case does not actually meet the definition, but they must track those decisions.

If a use case is determined to need additional risk management, agencies must conduct pre-deployment testing, complete an AI impact assessment, monitor performance and potential adverse impacts, ensure human users are trained, and ensure people who are impacted by decisions stemming from that tool “have access to a timely human review and a chance to appeal any negative impacts, when appropriate.”

In 2024, roughly 16% of use cases across the federal government were rights- or safety-impacting, with DHS, DOJ and VA again leading the pack. HHS had reported a small number then, too. Despite having the highest number of use cases of any agency last year, HHS’s disclosure had only four that fell under the increased risk management labels. None of those entries is listed as high impact on the department’s 2025 inventory.

Loss of risk management

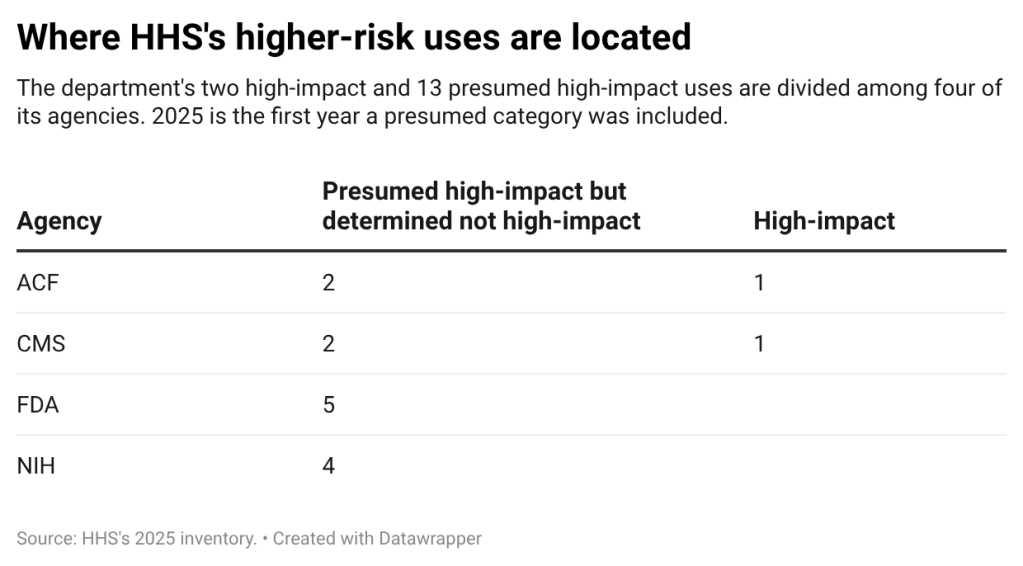

Of the four formerly rights- and safety-impacting uses, two were at the Health Resources and Services Administration (HRSA) and two were at the Administration for Children and Families. It’s unclear whether one of the ACF use cases is still on the 2025 posting. However, the other three carried over and are not marked high impact.

Among them is the Palantir system being used by ACF’s Office of Refugee Resettlement. The entry is titled “Structuring and Validating Completeness of Case Data Information” and is an agentic AI system used to help the agency more quickly review information about a minor’s parents and the reason for separation.

According to the inventory, when a minor is separated from their parents or legal guardian, CBP “is required to send certain pieces of information about the parent/legal guardian.” That process has been in place since November 2024 and is in accordance with a legal settlement known as Ms. L. v. ICE. That settlement limited the circumstances under which parents and legal guardians could be separated from their children.

That information is sent to ORR “in a block of free text” that sometimes omits required details, and the office has historically tracked it “manually and inconsistently.” The tool, called ACF Horizons, is described as providing “more easily searchable and accurate data on family separations” and “quicker validation of CBP compliance in essential data sharing for separated families, enabling faster follow-up as needed to receive any missing data points.”

Although the tool was listed as “safety-impacting” last year, the new inventory states that it was presumed high impact but determined not to be. The reason provided by the agency is that it’s “narrowly focused on extracting key data points from scanned notices” and the outputs don’t “serve as a principal basis for decisions or actions with legal, material, binding, or significant effect” on the categories listed in OMB’s memo.

The other previously safety-impacting ACF use case was for triaging ORR’s backlog of “notice of concern” forms, which are used to convey information about the safety of children who have been released from the agency’s care. That use case is very similar to one listed on the 2025 inventory, though it does not have the same title.

On the 2025 use case inventory, the entry is titled “Structuring Notice of Concern Data” and states that AI will be used to extract data points from those narratives and review the completeness of those forms. According to the inventory, ORR received hundreds of the forms each day. It cites a personnel shortage in the team that reviews those documents — the Prevention of Child Abuse and Neglect Team — as a reason for the backlog.

The 2024 use case, titled “Triaging Notice of Concern Submissions,” similarly was used to review those forms and address the backlog — but also stated that the AI tool would be used to provide recommendations on prioritization of those documents. The new entry does not reference prioritization.

ACF states that while the use is presumed high impact, it was determined not to be. As with the use case for parsing parental and legal guardian data, the agency said the use is “narrowly focused on extracting key data points from unstructured narratives and validating data completeness,” and the outputs aren’t a principal basis for a decision as defined under the memo.

Meanwhile, at HRSA, the two use cases not listed as high impact in 2025 are aimed at streamlining the process of reviewing applications for nursing scholarships. Neither use is even listed as having been presumed high impact, and both are still in pre-deployment.

One of those entries, titled “Scholarship Insight,” would deploy generative AI to review essays submitted for the National Health Service Corps (NHSC) and Nurse Corps Scholarship. That AI would be trained to review those essays and “do the first cut at scoring.”

The other entry, titled “Site Application Analysis,” would be used to review documents that determine the eligibility of sites to employ members of NHSC and Nurse Corps — as well as the Substance Use Disorder Treatment and Recovery Loan Repayment Program.

Lack of accountability

The only two high-impact tools that do appear on HHS’s inventory are both in pre-deployment phases and have few additional details, such as the vendor or system name.

ACF, for example, has an entry titled “Unaccompanied Child Sponsor Identity Verification.” That use case is intended to vet adults applying to sponsor or care for a child currently under the care of the Office of Refugee Resettlement, per the inventory. While it will use “computer vision,” the outputs were simply described as “confirmation that person A is person A at all touchpoints of the sponsor vetting process.”

The Centers for Medicare and Medicaid Services also reported a test of a system called iVeri-Fi, which is labeled high impact. That entry states that the government contractor Secro began using the tool in 2024 and that it is already used as part of the agency’s “Eligibility Workers Support System (EWSS).” It states that the tool will “make the adjudication decision using Remote Identity Proofing (RIDP) business rules.” It is not clear what “adjudication decision” the entry is referring to.

A total of 13 additional entries were presumed high-impact but determined not to be.

Advocates and researchers reviewing the inventories were deeply skeptical of the department’s small showing and pointed to a need for additional information and explanation to hold agencies like HHS accountable for their uses.

Cody Venzke, a senior policy counsel in the ACLU’s National Political Advocacy Department who is focused on surveillance, privacy, and technology, pointed to longstanding concerns with agencies’ ability to make exceptions in their publications.

“Agencies are not providing a full description of their analyses, releasing an explanation of how they’re taking advantage of particular exceptions, and that raises significant concerns that we can’t double check at the agency,” he said.

Anex-Ries, from CDT, similarly questioned the sincerity of the reasons agencies provided for uses not meeting the high-impact bar, particularly when those answers aren’t detailed.

HHS, like the Department of Homeland Security, appears to be treating the definition’s “a principal basis” language as a workaround to avoid classifying presumed high-impact cases as high impact, Anex-Ries said.

“I think this should definitely raise eyebrows about whether this is a faithful reading of the definition or an opportunistic reading of the definition to avoid safeguards on the part of the agency, and really what it comes down to is that the justification we get is only two sentences long,” Anex-Ries said.

Ultimately, the category and minimum risk management practices are meant to prevent negative impacts of the technology. As the Trump administration’s own memo describes it, the category is a method to ensure that AI is serving the public.

“Every day, the Federal Government takes action and makes decisions that have consequential impacts on the public,” Trump’s OMB memo said. “If AI is used to perform such action, agencies must deploy trustworthy AI, ensuring that rapid AI innovation is not achieved at the expense of the American people or any violations of their trust.”