HHS’s reported AI uses soar, including pilots to address staff ‘shortage’

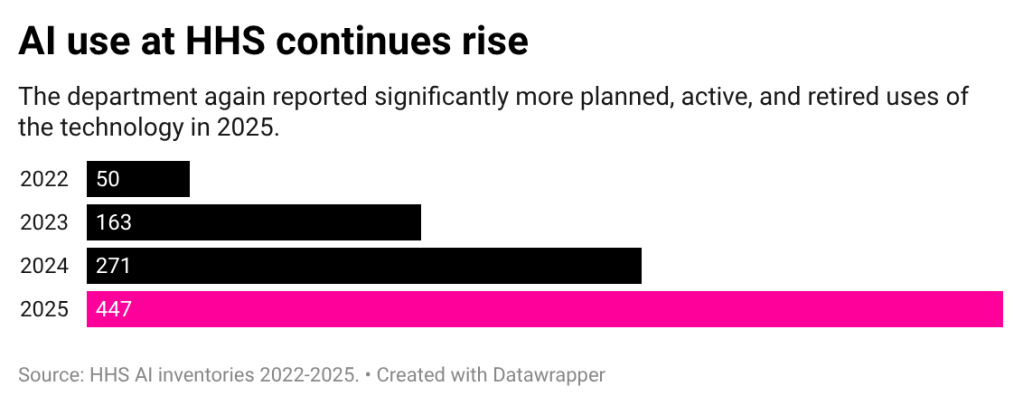

Reported uses of AI increased by 65% at the Department of Health and Human Services in 2025, according to the agency’s latest inventory, indicating even more widespread use of the technology as leadership simultaneously moved to reduce the workforce.

New use cases included pilots aimed at alleviating staffing shortages, multiple disclosures of so-called “agentic” tools, and an additional deployment for data related to the department’s work with unaccompanied children. Over half of the uses for 2025 are in pre-deployment or pilot phases, which means most of the applications are just getting started.

Valerie Wirtschafter, a Brookings Institution fellow focused on artificial intelligence and emerging technology, said her read on the inventory is that “there’s been a huge focus” on expansion at the agency. And HHS was already among the agencies with larger use case inventories in years past.

They’re “leaning into it,” Wirtschafter said.

The uptick in uses at HHS follows years of dramatic jumps in the health and human services agency’s reported uses of the technology. In 2023, the department’s inventory more than tripled from the previous year, and in 2024, it reported a 66% surge. The latest increase, of course, is set against the backdrop of significant change at the department and an aim by HHS Secretary Robert F. Kennedy Jr. to do “a lot more with less.”

Over the past year, the department moved to restructure bureaus and fire thousands of employees, in line with the Trump administration’s calls for agencies to reduce their footprint. At least two use cases specifically cite a limited workforce as a reason for the tool.

The Office for Civil Rights disclosed pilots using both ChatGPT and Outlook CoPilot to address the problem of “staffing shortages.” ChatGPT is used for efficiency in investigations “to break down complex legal concepts in plain language and identify patterns in court [rulings] impacting Medicaid services.” Meanwhile, CoPilot is used via Outlook for “faster correspondence with public.”

According to the inventory, both of those uses were for “law enforcement.” Neither entry included details about how specifically the generative tools were addressing workforce shortages.

HHS and OCR did not respond to FedScoop’s requests for comment to further explain some of the uses and provide additional details not included in the publication. FedScoop submitted a request through HHS’s email form and followed up with spokespeople directly. FedScoop also submitted an inquiry through OCR’s own email form.

While it’s hard to tell exactly what staffing issues OCR might be addressing and how, some generally cautioned against any uses meant to replace — rather than assist — workers.

“AI can be an important tool in any number of professions, and it can certainly have the potential to make government better and more efficient for citizens and all people,” said Cody Venzke, a senior policy counsel in the ACLU’s National Political Advocacy Department who is focused on surveillance, privacy, and technology.

That said, Venzke added: “It is not a stand in for all human decision making, and that is especially true when you are committed to breakneck downsizing of the federal government.”

Wirtschafter similarly noted that the need for uses aimed at addressing staffing shortages “seems to be a little bit of a problem, maybe of their own making.”

But Wirtschafter applauded the inventory for giving the public transparency into interesting uses like those at OCR. “Part of the fact that they have provided this transparency is that you can see, sort of, the problems that are attempting to be solved,” she said.

Agentic uses, Palantir, xAI

Among the other notable use cases, the Administration for Children and Families disclosed that it’s planning to use an AI system to verify identities of adults applying to sponsor unaccompanied minors in the care of its Office of Refugee Resettlement.

That use case is currently in the pre-deployment phase but is one of the department’s few “high-impact” entries, which are uses that will need to adhere to additional risk management practices to continue operating. It does not disclose the vendor.

Other tools seemed aligned with the Trump administration’s political goals. ACF disclosed that it had deployed two tools to identify position descriptions and grants that run afoul of the president’s executive orders aimed at erasing diversity, equity, and inclusion from the federal government.

Those use cases both list Palantir as a vendor and cite “increased efficiency of review, with reduced administrative burden on staff” as reasons for the tool. Palantir — which has attracted attention for its work with Immigration and Customs Enforcement during the Trump administration — is a frequently listed vendor in HHS’s inventory, with more than 15 use cases attributed to the tech company. The majority of those use cases are within ACF.

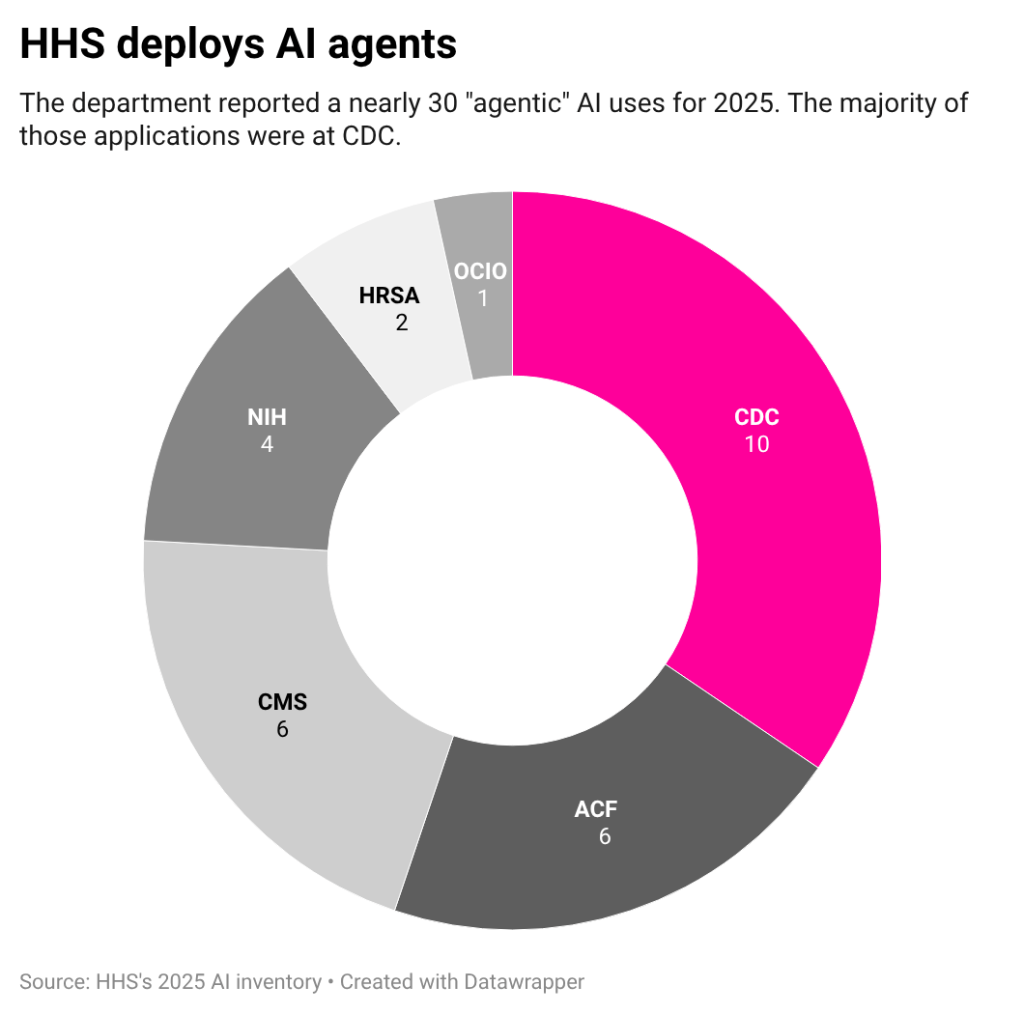

Additionally, HHS lists multiple “agentic” AI uses for 2025.

Agentic AI is a commercially buzzy application of the technology in which specific tasks are performed autonomously or with little human intervention. While those use cases are still just a small portion of HHS’s inventory, last year there were only a handful of use cases called AI agents across the entire federal government.

One of those entries is a Centers for Disease Control and Prevention pilot of OpenAI’s Deep Research. According to the disclosure, the tool is being used to help the agency process large volumes of research and data, producing report-style outputs. The entry references an internal study that found “94% of prompts resulted in successful, high-quality reports” and that most of those were completed within 30 minutes.

Other uses included an agent for Freedom of Information Act responses at the Health Resources and Services Administration and an internal use case for staff questions about ethics policies at the National Institutes of Health. Both of those were pre-deployment.

Not included in the inventory: HHS’s use of xAI’s Grok as part of its recent RealFood.gov website rollout. The website, which encourages Americans to “eat real food” rather than processed food, is in line with the agency’s new dietary guidance. The page directs users to use AI to answer their health questions and links directly to Grok.

While that use of the Elon Musk-affiliated AI chatbot isn’t on the inventory, the agency did list “xAI gov” as one of the services it uses for generating document first drafts and other communications, as well as for scheduling and managing social media posts. Both of those use cases were listed on a separate inventory of common commercial applications of the technology.

Room for improvement

Compared to other inventories, Wirtschafter said HHS’s publication is among the more detailed submitted by agencies for 2025 in terms of information about each use and the problems it aims to fix.

But that doesn’t mean the publication is perfect. The lack of high-impact use cases on HHS’s inventory, for example, has raised questions among advocates and researchers looking into the disclosures.

The new inventory is also difficult to compare year over year. Unlike last year’s inventory or many other inventories published this year, HHS’s new publication does not have unique identifiers for each entry. Those identifiers are generally an alphanumeric code the agency designates for each use, and without them, it’s difficult to identify new tools, particularly if the name or other details have changed.

And blank fields also leave advocates wanting more.

“Reviewing the inventories raises serious questions about the amount of transparency that is actually being provided here, because there are many, many fields that are left blank or simply are noted to be sensitive information, not for public disclosure,” Venzke said.

Specifically, Venzke pointed to the absence of public privacy impact assessments for the overwhelming majority of use cases.

Out of all of the submissions in HHS’s inventory, just one indicated where the privacy impact assessment was located. Although the category appears in the guidance sent to agencies by the White House Office of Management and Budget, some agencies did not include it at all. While HHS did include it, the inventory provides little to no information about how the agency has assessed privacy, if at all.

Agencies, by statute, are required to conduct privacy impact assessments (PIAs) for technologies that collect personally identifiable information to ensure those systems are equipped to protect that information. Those assessments are then required to be publicly accessible.

But finding public privacy impact assessments is often difficult, in part because systems can use different names and the assessments can cover multiple systems, Venzke explained. “Even just a link to the PIA provides a lot more surety for holding the government accountable,” he said.

HHS either provided no link, said the PIA was forthcoming, or said the PIA wasn’t publicly available. Venzke said that “defies the entire point of having a PIA, which is public transparency.”

For Quinn Anex-Ries, a senior policy analyst at the Center for Democracy and Technology’s equity in civic technology team, the spike in inventory size prompts questions about what the agency is doing to manage it.

“The thing that’s worth paying attention to most is, what, if anything, the agency is saying or doing to account for the fact that their total AI use has increased by such a significant degree,” Anex-Ries said. “When we look to places like their AI compliance plan and AI strategy, it’s not really clear that they did a bunch of additional groundwork to prepare the agency for their AI use to grow by such a huge margin.”

Interior Department finding momentum with modernization

The Department of the Interior is taking a tech-forward approach to its contracting processes, a senior official said last week, part of an overarching agency-wide embrace of modernization.

Speaking at an Atlassian event in Washington, D.C., Interior’s Andrea Brandon said the agency has added three bots — referred to internally as Bob, Bobby and Oz — to its contracting workflows. The RPA tools are designed to ease cumbersome tasks and streamline the process, such as tracking small business goals across categories and modifying token additions, according to Brandon, Interior’s deputy assistant secretary for budget, finance, grants and acquisition.

“We are having a really good time with our bots and naming them,” Brandon said. “It actually really helps engage the workforce.”

In addition to incorporating bots in procurement and contracting processes, Interior is exploring emerging technologies, such as generative AI and blockchain, as well as building off lessons learned from other agencies. The Department of Homeland Security has a well-known procurement innovation lab that DOI has found fruitful for its own endeavors.

“We, as a federal agency, went over to their innovation lab to see what they had going on,” Brandon said. “It was a very good, collaborative experience. We can all benefit from the different things that other federal agencies have going on.”

Interior’s IT roadmap is also based on stakeholder feedback via vendor responses to requests for information, guidance from its executive steering committee and other avenues.

“We take a look at that feedback pretty regularly, and we look for consistent themes across it,” Brandon said. “It helps us build our vision for moving forward, or it helps us tweak some of the courses that we have. … If they tell us the system’s really bad and [we] really need to upgrade the system, that’s positive for us.”

The agency uses that feedback to build stronger cases for technology projects in its budget formulations.

Alignment, miscalculations

As it finds momentum with modernization, DOI is still honing internal alignment.

The agency has faced hiccups along the way. Notably, an audit found that Interior had misclassified $40 million worth of IT purchases during fiscal years 2022 to 2024, increasing the odds of redundant purchases, breach risks and other vulnerabilities, according to the inspector general report.

When the review was released in September 2025, changes were already underway. Interior was working to centralize its IT infrastructure and compliance functions across the department, as directed by an order from the secretary posted in April.

“We’re actually getting everybody acclimated to being at headquarters level, because that’s a little different than being at the bureau level,” Brandon said of the cultural change facing workers.

Like other agencies, Interior is in the midst of an emerging tech push while feeling the pressure to do more with less, cut costs and unlock efficiencies.

The agency lost more than 6,000 workers last year, and it has seen its workforce reduced by another 7,400 in the first two months of this year, according to OPM’s federal workforce data. Of those, more than 500 held an IT management position.

A number of the 200-plus use cases listed in the agency’s AI inventory are said to help lower costs or lessen manual labor needs. One tool identified by the DOI is being used to inspect and remove blank pages associated with litigation preparation, aiming to lower costs for records storage and removing the need for staff to complete the task. Another use case is said to have “reduced manual labor cost” via AI-powered identification of responsive documents.

Brandon said the agency is “on top of” the qualitative type of measurements needed to track success of investments in IT, but there is room for improvement elsewhere.

“As far as quantitative, like looking for key performance indicators and actually measuring them, I don’t know that anywhere is quite there yet,” Brandon said. “That’s something we need to address.”

House bill seeks AI workforce tax credits, agency-led outreach

A pair of House lawmakers want a new tax credit for artificial intelligence workforce training — and an agency-led push to make sure companies take advantage of it.

The AI Workforce Training Act from Reps. Josh Gottheimer, D-N.J., and Mike Lawler, R-N.Y., would amend the Internal Revenue Code so that companies can claim a tax credit if they offer various AI career development programs.

“AI is already changing how we work and that transformation will keep getting faster, and we can’t let the American worker get left behind,” Gottheimer, co-chair of the House Commission on Artificial Intelligence and the Innovation Economy, said in a press release Wednesday.

“Change is coming,” he continued, “and if we want America to continue to lead the world in AI innovation, we need to make sure American workers are ready for the jobs of the future. This bipartisan bill will help workers build critical AI skills, boost productivity, and strengthen our economy — all while keeping the United States at the front of the pack.”

Per the bill text, companies could claim a tax credit equivalent to 30% of qualified expenses to train employees on how to use, manage and build AI systems. The credit would be capped at $2,500 per employee annually.
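As a rough illustration of that arithmetic, the sketch below computes the credit for one employee in one year. It assumes the $2,500 cap applies to the credit amount itself rather than to the underlying expenses; the function name and parameters are hypothetical and do not come from the bill text, which may contain additional eligibility rules not modeled here.

```python
def training_credit(qualified_expenses: float,
                    rate: float = 0.30,
                    cap: float = 2500.0) -> float:
    """Estimate the per-employee annual credit (hypothetical helper).

    Assumption: the credit is 30% of qualified training expenses,
    limited to $2,500 per employee per year.
    """
    if qualified_expenses < 0:
        raise ValueError("qualified_expenses must be non-negative")
    # Apply the rate first, then limit the result to the annual cap.
    return min(qualified_expenses * rate, cap)


if __name__ == "__main__":
    # $5,000 in expenses falls under the cap; $10,000 exceeds it.
    for expenses in (5000.0, 10000.0):
        print(f"${expenses:,.0f} in expenses -> ${training_credit(expenses):,.2f} credit")
```

Under these assumptions, a company would reach the cap once it spends roughly $8,333 on qualified training for a single employee in a year.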

Expenses covered by the legislation include accredited courses, certificate programs and workshops. In-house instruction on topics such as AI ethics, data literacy, machine-learning fundamentals and prompt engineering would also be eligible for the tax credit.

To get the word out about the tax credit, the Commerce, Labor and Treasury departments would be tasked with developing and launching a public awareness campaign. The agency-run outreach would include informational webinars, publications, and multilingual materials distributed through small business development centers, trade groups and job boards.

“If quantum computing and AI are the future, our workforce can’t be left behind,” Lawler said in the press release. “This workforce tax credit gives them the training they need to compete for the high-paying tech jobs of tomorrow, right here at home.”

The legislation from Gottheimer and Lawler follows a string of AI workforce-related bills introduced at the end of last year, including a bipartisan, bicameral effort to help agencies recruit and retain AI talent; a push from Senate Democrats to get Commerce, Education and Labor to study AI’s effect on the workforce; and a bipartisan Senate attempt to put DOL at the center of an AI workforce research hub.

FAA, DOD data silos were partly to blame for last year’s DCA crash

Inadequate information-sharing and deficient data practices across the Federal Aviation Administration and Department of Defense were to blame, in part, for the midair collision near Ronald Reagan Washington National Airport last year, according to the National Transportation Safety Board’s final report.

NTSB found that the FAA’s Air Traffic Organization was “made aware of and had multiple opportunities to identify the risk of a midair collision between airplanes and helicopters,” yet insufficient data analysis, safety assurance systems and risk assessment processes “failed to recognize and mitigate” that risk.

While the Army was “unaware” of certain risks tied to DCA due to a nonexistent flight safety data-monitoring program for its helicopters, NTSB also found the Army had a weak safety management system that failed to consistently detect hazards.

“The limited access to and use of available objective and subjective proximity data hindered industry and government stakeholders’ ability to identify hazards and mitigate risk,” NTSB said in its report.

As part of NTSB’s analysis, the watchdog had 50 to 60 staff members on the investigation, who gathered 19,000 pages of evidence, Jennifer Homendy, chairwoman of the NTSB, testified during a Senate hearing Thursday. The collision, ultimately, was preventable, she said.

“Now that our investigation has concluded, I can say without a shadow of a doubt that we’ve seen this before,” Homendy said. “We’ve investigated similar midair collisions going back decades, and we’ve issued safety recommendations, like [Automatic Dependent Surveillance–Broadcast], over and over and over again aimed at preventing these kinds of collisions, recommendations that have been rejected, sidelined or just plain ignored.”

NTSB ran into its own hurdles while trying to gather data from the FAA, Homendy added, pointing to denied access to reports and a poor safety culture within the organization.

“Throughout our investigation, we found numerous people who were afraid to talk to us,” Homendy said. “At our own hearing, I had to get everyone to commit not to retaliate — still, that occurred.”

The NTSB recommended the FAA improve its data analysis and share that data with external stakeholders, such as other federal agencies or private-sector airline companies.

“They are not doing what we have recommended, and what we have been urging them to do the entire time, which is not only evaluate their data — which they’re starting to do now — but to develop a simple definition of what a close call is,” Homendy said.

The FAA is facing criticism amid a major overhaul of its air traffic control system, which could end up costing upwards of $30 billion.

“Applying the hard lessons we’ve learned from the DCA accident, the FAA safety team identified controller workload and system demand as emerging risk factors, and as a response to this increased risk, we temporarily reduced operations,” FAA Administrator Bryan Bedford told lawmakers in December. At the time, the senior official said an agencywide safety management system enabling quicker reactions and analysis of incidents was on the priority list.

The DCA collision report also comes mere days after U.S. Customs and Border Protection personnel shot down an object near El Paso, Texas, in what has become the latest signal of interagency friction between the DOD and FAA. In a confusing twist of events, the FAA posted a flight restriction notice for “special security reasons” that ended within hours despite being expected to last until Feb. 21.

“There has been miscommunication, or no communication, between at least the Army and FAA for years,” Homendy said.

In an emailed statement, Army spokesperson Maj. Montrell Russell thanked Homendy and NTSB investigators, and said the “Army remains committed to collaborating closely with the NTSB, FAA, and other federal partners to support lasting improvements in aviation safety that honor those who were lost.”

A Department of Transportation spokesperson said in an emailed statement that the FAA “values and appreciates the NTSB’s expertise and input” and it has “acted immediately to implement urgent safety recommendations it issued in March 2025.” The agency added that it will “diligently” consider additional recommendations.

In a post to X, Transportation Secretary Sean Duffy positioned the incident as a collaborative effort. “The FAA and DOW acted swiftly to address a cartel drone incursion,” he said.

Despite Duffy’s assertions, skeptics remain.

“The FAA is saying that we’re going to shut down airspace for 10 days, and then another agency is saying something different,” Sen. Maria Cantwell, D-Wash., said during the hearing. “It just seems to me that we have a real problem of coordination between DOD and FAA.”

Codifying NTSB recommendations

In addition to requesting an interagency briefing, lawmakers reaffirmed their desire to pass the Rotorcraft Operations Transparency and Oversight Reform Act during the hearing Thursday.

The Senate unanimously passed the bill in December and it has been in the hands of the House since. Leaders of the Senate Committee on Commerce, Science and Transportation pushed for the ROTOR Act to be included in this year’s appropriations package, but the request was ignored.

“While the ROTOR Act addresses critical safety shortfalls and loopholes that contributed to the DCA mid-air collision, the safety enhancements will apply nationwide,” the senators said in a letter to Majority Leader John Thune of South Dakota, Minority Leader Chuck Schumer of New York and others.

The legislation has garnered support from the Department of Transportation, the FAA, NTSB, and the DOD. The ROTOR Act codifies many of the NTSB recommendations, according to the chairs of the committee.

“I’ve heard some faint grumbling from stakeholders and others who want to put the same kind of loopholes into the ROTOR Act that caused the DCA crash,” Senate Commerce Committee Chairman Ted Cruz, R-Texas, said during the hearing. “Some want exemptions for private jets, while a few airlines quietly carp about the cost of safety enhancing technology. These criticisms aren’t valid, and they are frankly disturbing.”

Homendy said American Airlines spent around $50,000 per plane to retrofit it with the automated, satellite-based broadcasts. Boeing, Airbus and Gulfstream all offer the tech on new planes, she added.

“It is possible; the technology is available,” Homendy said. “We should not have to be here, and we wouldn’t be if the NTSB warnings had been heeded.”

This story was updated Feb. 18 with comments from a DOD spokesperson and Feb. 19 with comments from DOT.

SEC chair considers ‘innovation exemption’ for in-house AI testing

The Securities and Exchange Commission is eyeing artificial intelligence sandboxes for entrepreneurs focused on “investor protection,” the regulator’s chief told lawmakers last week.

During a Senate Banking Committee hearing, Sen. Mike Rounds, R-S.D., asked Chair Paul Atkins if his bipartisan bill to establish enforcement-free AI testing at financial regulatory agencies would “give the SEC the tools it needs to foster responsible AI innovation.”

The Unleashing AI Innovation in Financial Services Act from Sens. Rounds, Martin Heinrich, D-N.M., Thom Tillis, R-N.C., and Andy Kim, D-N.J., would direct the SEC, the Federal Reserve, the Consumer Financial Protection Bureau and other federal financial agencies to create in-house AI innovation labs. The agency-run sandboxes would allow for testing of AI projects “without unnecessary or unduly burdensome regulation or expectation of enforcement actions,” per the bill text.

Atkins told Rounds he hadn’t yet reviewed the legislation, but he’s on board with the bill’s “premise” and has been mulling over a similar idea.

“I’ve been talking about an innovation exemption, to begin at the SEC, to allow entrepreneurs in a sandbox-like environment that’s … cabined, time-limited, transparent, flexible, and then focused on investor protection,” Atkins said. “So all of those principles, I think, are important, and to allow people to try different things in a particular environment and then prove their concept.”

Rounds noted in his questioning that the White House’s AI Action Plan has an explicit callout for the creation of “regulatory sandboxes” at agencies, including the SEC. Those sandboxes would be supported by the Department of Commerce via the National Institute of Standards and Technology’s AI evaluation initiatives, per the strategy document.

“This venue would allow SEC-regulated entities such as broker-dealers, investment advisers to test new AI tools under structured oversight,” Rounds said.

It remains to be seen whether Rounds’ bill moves forward in the Senate, and whether Atkins’ “innovation exemption” idea comes to pass. But the SEC already has some seemingly small-scale sandbox experience, according to its recently released AI use case inventory.

The SEC’s Office of Human Resources has deployed what it calls a “Training Conversation Tool” built by the AI management platform Skillsoft. The tool uses natural language processing to improve “communication and collaboration skills of the SEC workforce” — but is listed as “a training sandbox only.”

Later in the hearing, Atkins was pressed by Sen. Mark Warner on agentic AI and whether the SEC believes banks and broker-dealers have proper guardrails in place to make sure autonomous tools of that kind don’t “commit a malfeasance or something illegal.” The Virginia Democrat added that there’s “bipartisan interest in helping on this.”

Atkins said he shared Warner’s concerns, but “people are still experimenting” with the technology, so it’s probably too early to tell what’s happening “with respect to broker-dealers or anything else.” But Valerie Szczepanik, the SEC’s chief AI officer, is looking at AI tools that can help with enforcement, corporate finance reviews and other areas, Atkins said.

“So whichever way this technology grows and changes, I think we have to be very attuned to those potential problems,” Atkins said.

SSA needs better assessment of data-sharing costs as Treasury program saves millions, GAO says

While a pilot program giving the Treasury Department access to the Social Security Administration’s death data is projected to save the government millions, SSA still needs to better evaluate the cost of collecting those records from states, a government watchdog warned in a new report.

A three-year pilot program with Treasury’s Do Not Pay initiative provides the agency with temporary access to the SSA’s full Death Master File — the compilation of deceased Social Security number holders — to prevent improper payments. States collect death data, and statutes require the SSA to pay them for the records. Federal agencies that also use the data must compensate SSA.

A report released Friday by the Government Accountability Office found that SSA did not comply with requirements when setting compensation rates with states for their death records. Instead, SSA paid each state the same amount for each death record and did not receive information from the states on individual collection costs.

“Without this information, SSA does not know if it is paying too much or too little for states’ data,” the report stated, adding that “SSA’s ability to negotiate appropriate prices with states may also be limited.”

Meanwhile, SSA’s payments to states have more than doubled under new contracts that went into effect in December 2023, the GAO found.

The government watchdog recommended that the agency identify and estimate the “relevant costs” to assess the extent of inconsistencies in its current pricing structure and, in turn, reduce administrative costs and improve efficiency.

Under the current model, government agencies, including Treasury, that receive state death data must pay the SSA a proportional share of the amount paid to states. After $4.6 million in costs, the Treasury Department found access to the Death Master File helped prevent, identify, or recover about $113.5 million in improper payments.

The government watchdog further determined the SSA’s share of data costs is expected to decrease from 42.4% of the total paid to states in 2024 to 23.1% in 2025, as the agency passes more costs to Treasury and other agencies. In doing so, it changed its cost methodology in 2025, failing to account for agencies’ proportional share of data costs, the GAO said.

In 2025, SSA assigned Treasury to reimburse the federal benefits agency approximately $9.2 million, well above the $6.1 million cap the two agencies agreed to in 2023, according to the report. As of April 2025, Treasury projects the pilot will save about $337 million in benefits over the next three years, but the GAO said better cost allocation methods are needed for the agency to make accurate projections.

“Absent a sound cost allocation methodology that is based on considerations related to agencies’ proportional share of costs, agencies, including Treasury, may not be able to make informed decisions about the cost-effectiveness of obtaining access to the full” Death Master File, the GAO wrote.

GAO issued three recommendations to address its concerns, and the SSA agreed with them. These included the SSA commissioner ensuring that the agency’s contracts with states reflect “statutorily authorized costs and include necessary documentation,” and conducting an analysis of state cost information to determine whether a renegotiation of the fee schedule is necessary.

The watchdog also recommended that the SSA commissioner ensure SSA revises its cost allocation methodology.

The report was released just days after President Donald Trump signed a bipartisan bill titled the Ending Improper Payments to Deceased People Act, which amends the Social Security Act to permanently authorize the SSA to share the Death Master File with the Treasury’s Do Not Pay System. The bill addressed a prior GAO recommendation on this issue.

State Department is gearing up to ‘roll out agentic AI’

After successfully launching its own internal chatbot and normalizing the use of artificial intelligence tools for translation, summarization and other diplomatically beneficial uses, the State Department is eyeing the next step in its journey with the emerging technology.

“We’re going to roll out agentic AI,” State Department CIO Kelly Fletcher said Thursday during the FedScoop-produced GDIT Emerge event in Washington, D.C. “We’re going to continue to embed AI in our systems.”

The State Department has been a federal leader in AI adoption, reflected in robust use case inventories and a general embrace of the technology at its highest levels. Current tech leaders remain focused on trying to “democratize access to generative AI” throughout the agency, Fletcher said. That likely means that any shift toward agentic AI won’t come with a snap of the fingers.

Still, the department is currently looking to “consolidate and standardize and simplify around commodities,” she said, which could cover everything from end-user devices to help desks.

“It sounds really wonky,” Fletcher added, but “the more you can make it easy for people to do their job, to reduce administrative friction, the better off you’re going to be, right? Part of that is agents. Part of that is consolidation.”

Agentic AI systems are able to create content in the way generative AI can, but agents can take it several steps further by operating autonomously. Having autonomous capabilities means AI agents can complete more complex tasks and make decisions without direction from a human in the loop.

A September 2025 report from the Government Accountability Office’s Science, Technology Assessment, and Analytics division noted that AI agents are currently used in areas such as software development and customer service. But there’s still plenty of maturing the technology has to do: Per the GAO, “a study found that the best performing AI agent tested was only able to autonomously perform about 30 percent of software development tasks to completion.”

Sarah Harvey, who authored the GAO report, said during an SNG Live event last month that it’s “pretty early to tell” how widespread agentic AI use is throughout the federal government, but the “opportunities are numerous.” She pointed specifically to operations and inventory management as areas of high potential for the technology’s usefulness.

Though the tech is in its relative infancy, there’s an expectation among some analysts that agentic AI could be built into a third of enterprise software applications by 2028. State’s 2025 use case inventory doesn’t explicitly list any agentic AI tools, but its workforce is growing increasingly comfortable experimenting with the tech in general.

“I love that people are building their own tools,” Fletcher said, “and they’re doing it in environments that are provisioned with safety, like security in place and with some amount of data available to them, and they’re building the tool that they need. So that’s sort of the overarching practice.”

For some department staffers, StateChat — the agency’s AI chatbot — may have been their gateway to artificial intelligence. State officials have worked toward a mobile version of the chatbot so employees can use it on their government-issued devices.

Fletcher said they’ve conducted training sessions at embassies to show diplomats how best to use the tool and what functionalities could be most useful for their work.

“We can look and see at that embassy, how does use of the tool change after that? And it grows by, you know, orders of magnitude,” Fletcher said.

At the January SNG Live event, a different State Department official revealed how StateChat has already leveled up after its late-2024 introduction. Isabel Rioja-Scott, the agency’s director for AI workforce enablement, said State had “just launched custom GPTs” for the chatbot.

“We’re seeing missions also kind of design their own solutions with these tools in hand,” Rioja-Scott said. “I think there are real challenges that we’re working through. … We need to write rules of the road to use this safely. It should also be enabling. It should be that balance so that we can use these tools effectively for our mission.”

As for Fletcher, she’s focused on other tech priorities for 2026, including the modernization of consular applications, such as visas, passports and an “underlying system” that “needs some work.” But she’s also focused on pushing generative AI adoption and setting the stage for the looming agentic AI revolution.

“My vision is that we’re going to slap AI agents on top of older systems to buy us some time, right?” Fletcher said. “We’re going to prioritize those systems that are, like, really expensive to maintain, lack resilience. We’re going to fix those first.”

HHS reports changes in deputy IT, artificial intelligence leadership

The Department of Health and Human Services made several changes to its IT leadership recently, including the addition of a new acting deputy chief information officer and acting deputy chief AI officer.

A webpage listing leadership within the Office of the Chief Information Officer currently has David Hong as acting deputy CIO and Arman Sharma as acting deputy chief AI officer. Meanwhile, Kevin Duvall, who was previously deputy CIO and acting deputy CAIO, is no longer on the page.

HHS didn’t respond to requests for comment Friday submitted through HHS’s email form and to spokespeople. FedScoop also attempted to contact Duvall separately.

The apparent change-up comes amid reports of a personnel shake-up at the health agency. On Friday, CNN reported that two top aides to Secretary Robert F. Kennedy Jr. were departing and new senior counselors would be installed. Those changes were related to preparations for midterm elections, per CNN.

It is not clear if the IT leadership changes were for similar reasons.

While there is no public indication of when Hong and Sharma began serving as acting deputies, the changes appear to have been made recently. The webpage itself says that it was updated Wednesday, and a version of it cached in the Internet Archive on Feb. 2 still shows Duvall in both roles.

The update also clarifies that Michael McFarland is the acting executive officer in the CIO’s office. He was previously not listed as acting. Together, the changes mean that of the 10 roles listed on the page, seven of them have an acting — rather than permanent — official serving in them.

CBP ramps up surveillance tech without much-needed IT personnel, GAO says

Customs and Border Protection has increased deployments of surveillance technology along the northern border over the past five years despite sluggish hiring levels of IT personnel needed to monitor the tech, according to a report by the Government Accountability Office published Thursday.

The staffing rate for information systems specialists has remained below target levels for half a decade, and the gap has widened since 2023. CBP officials pointed to low pay, a lengthy background investigation process, a limited local applicant pool, high cost of living and minimal career advancement opportunities as drivers of attrition and the inability to fill open positions.

GAO conducted the audit over a nearly two-year period, starting in April 2024 and concluding this month. In examining CBP’s northern border facilities, the watchdog found that CBP did not have a strategy to address the critical staffing gap. A senior Border Patrol official in charge of workforce planning said Border Patrol expects those in the information systems specialist role to “leave the agency and look for better career opportunities,” according to the report.

The lack of needed IT personnel is to the detriment of CBP, the auditors found.

“Developing a plan with strategies to improve the recruitment and retention of Law Enforcement Information Systems Specialists would help Border Patrol ensure that it has sufficient personnel with appropriate skills to effectively use northern border surveillance technology,” GAO said in its report.

While low staffing rates for key IT support roles present a critical roadblock for CBP, the Department of Homeland Security division faces other challenges as well.

Some of the surveillance technology, such as automated sensors, isn’t built for cold weather, GAO found. With the ground near the northern border remaining frozen for a significant part of the year, officials said agents aren’t able to receive or access data from those sensors. CBP is also dealing with weak communications technology and infrastructure, making it more difficult to deploy surveillance tech.

CBP officials cited challenges with information and data sharing across facilities and other law enforcement agencies as another hindrance limiting operations.

The auditors provided DHS with a draft of the report for review prior to its publication. DHS agreed with the recommendation to develop a recruitment and retention strategy for its key IT support staff. GAO said the agency identified steps it plans to take to ameliorate the situation.

“For example, DHS noted that Border Patrol is offering training opportunities to existing Border Patrol processing coordinators so they can fill Law Enforcement Information System Specialist positions, and that Border Patrol is planning to analyze the feasibility of retention incentives for this position,” GAO said in its report.

IRS moves operations employees to tax services for filing season amid workforce cuts

The Internal Revenue Service moved forward this week with plans to involuntarily move employees with no direct tax experience to perform customer service and analysis duties for this year’s filing season.

According to email notices obtained by FedScoop, multiple IRS employees from the agency’s IT and human capital office were informed Monday that they were assigned to a 120-day involuntary detail to the agency’s Taxpayer Services division, as either a customer service representative or a tax examiner. The detail, effective Feb. 22, could be extended beyond the four-month period, per the notice.

Joseph Ziegler, the agency’s chief of internal consulting, stated in the notice that neither position will require direct engagement with taxpayers or answering phones, adding that the tax filing season is the “most important time” of the year for the agency.

It is unclear how many employees were affected by the temporary reorganization, but it follows a series of shakeups and losses for the agency. Those impacted include IT employees who were part of a group of about 1,000 staffers abruptly moved from the IRS’s Office of the Chief Information Officer to the Office of the Chief Operations Officer last December.

“How do you think Joe Taxpayer will feel about people with no tax experience handling their taxes?” one IRS IT employee, speaking on the condition of anonymity, told FedScoop.

Other employees are taking the task in stride. One non-IT staffer impacted by the reassignment said the move is “ultimately to help support taxpayers, no matter where we work.”

Monday’s notice stated that training will begin Feb. 23, and employees can expect to receive FAQs for “common questions.” The agency sent another notice to management Thursday, stating that these supervisory roles will receive about nine weeks of instructor-led training, followed by four weeks of on-the-job instructor support.

“We are glad to have you join us and look forward to your contributions to the One IRS Initiative as you assist with working taxpayer inventory,” the Thursday notice, obtained by FedScoop, stated.

The IRS did not immediately respond to FedScoop’s request for comment.

Just a few weeks ago, the Treasury Department’s watchdog issued a memo to the IRS commissioner stating that the IRS’s cuts over the past year had returned staffing to October 2021 levels, and warned that the agency’s IT and modernization projects could be delayed as a result.

According to the Treasury Inspector General for Tax Administration, the IRS lost about 19% of its staff as of October 2025, including 8,300 workers responsible for filing functions like returns processing, customer service, and updating computer systems. This included 16% of the IT workforce, which would have contributed to system updates to account for inflation and expiring or new tax provisions, the IG added.

It remains to be seen whether the latest movement will further delay modernization efforts and increase the backlog of tax returns awaiting processing.

“This takes the IT staff [who] know the background and could be supporting those efforts even further away from being able to complete those tasks,” an IT staffer said Thursday.

FedScoop’s Matt Bracken contributed reporting.