Secret Service ditches Anthropic’s Claude

The Secret Service has removed Anthropic’s technology from its workflows to comply with a directive from President Donald Trump requiring federal agencies to ditch the Claude-maker within the next six months.

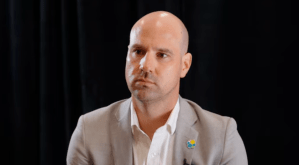

The Department of Homeland Security component had used Anthropic’s Claude models for code generation, a focus area for many organizations, according to Secret Service CIO and Chief AI Officer Chris Kraft.

“That application does have the ability to leverage Claude models … but they’re easy to change out,” Kraft said. “There’s a whole list of a bunch of different models that you can choose from, and we will follow the guidance and leverage other models.”

The Secret Service joins a growing group of agencies that are phasing out Anthropic’s technology following the company’s clash with the Department of Defense in late February.

Software developers at the Treasury Department had been using Claude Code, though Secretary Scott Bessent said last week that the agency is terminating its use. The Office of Personnel Management, NASA, the Commerce Department, the General Services Administration and the Department of Health and Human Services are untangling Anthropic from AI use cases, if they haven’t stopped using Claude already.

The Anthropic-DOD situation has illustrated the potential disruption that can come from relying on one vendor’s AI technologies.

“No single AI vendor is too big to fail,” tech industry analysts from advisory firm Gartner said in a research note published in late February.

Like the Secret Service, other agencies have decoupled specific AI models from applications, easing the transition from one model to the next. FedScoop previously reported that the State Department’s generative AI chatbot, for example, could run on multiple models, and Claude was one of the options. When tasked with removing Anthropic, organizations that have built flexibility into their platforms, as with GSA’s USAi.gov platform, can remove access to Claude without taking down the entire system.
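For readers who want a concrete picture of that decoupling, the following is a minimal sketch in Python, not a description of any agency's actual system. It assumes the application talks to a small registry rather than to one vendor directly. Every name in it (`ModelRegistry`, `model-a`, `model-b`, `build_demo_registry`) is hypothetical, and the backends are stub functions standing in for real model APIs.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Set, Tuple

# Hypothetical type: a backend is any function that maps a prompt to text.
Backend = Callable[[str], str]


@dataclass
class ModelRegistry:
    """Keeps the application independent of any single model provider."""
    backends: Dict[str, Backend] = field(default_factory=dict)
    disabled: Set[str] = field(default_factory=set)

    def register(self, name: str, backend: Backend) -> None:
        self.backends[name] = backend

    def disable(self, name: str) -> None:
        # Removing access to one model leaves the others untouched.
        self.disabled.add(name)

    def available(self) -> List[str]:
        # Registration order doubles as the fallback order.
        return [n for n in self.backends if n not in self.disabled]

    def complete(self, prompt: str, preferred: Optional[str] = None) -> Tuple[str, str]:
        names = self.available()
        if not names:
            raise RuntimeError("no models are currently available")
        # Use the preferred model if it is still enabled, else fall back.
        name = preferred if preferred in names else names[0]
        return name, self.backends[name](prompt)


def build_demo_registry() -> ModelRegistry:
    # Stub backends (assumptions for illustration only).
    registry = ModelRegistry()
    registry.register("model-a", lambda prompt: f"[model-a] {prompt}")
    registry.register("model-b", lambda prompt: f"[model-b] {prompt}")
    return registry
```

In this sketch, calling `disable("model-a")` means later requests that ask for `model-a` are served by `model-b` instead, and the application code that calls `complete` does not change.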

As the fallout continues, Kraft said he has been thinking about the importance of redundancy and proper testing. The challenge for technology leaders isn’t the availability of different AI solutions, but rather knowing which models to use for which use case.

“Continuing to evaluate what’s available and what’s a good application of the different models and having that to fall back on in case something’s not available [or] the landscape changes” is critical, according to Kraft.

Federal IT leaders who stay up to date and test new models to get a sense of how the technology would behave in real-world workflows will have an easier time making a switch.

“You have to be able to test and have confidence in what the output is going to be, whether it’s a generative AI model, facial recognition model, license plate reader or whatever it happens to be,” Kraft said. “That’s such an important part of being successful.”