Senate’s new AI caucus will work toward ‘responsible policy’

A bipartisan group of senators announced Wednesday that it’s launching the Senate Artificial Intelligence (AI) Caucus.

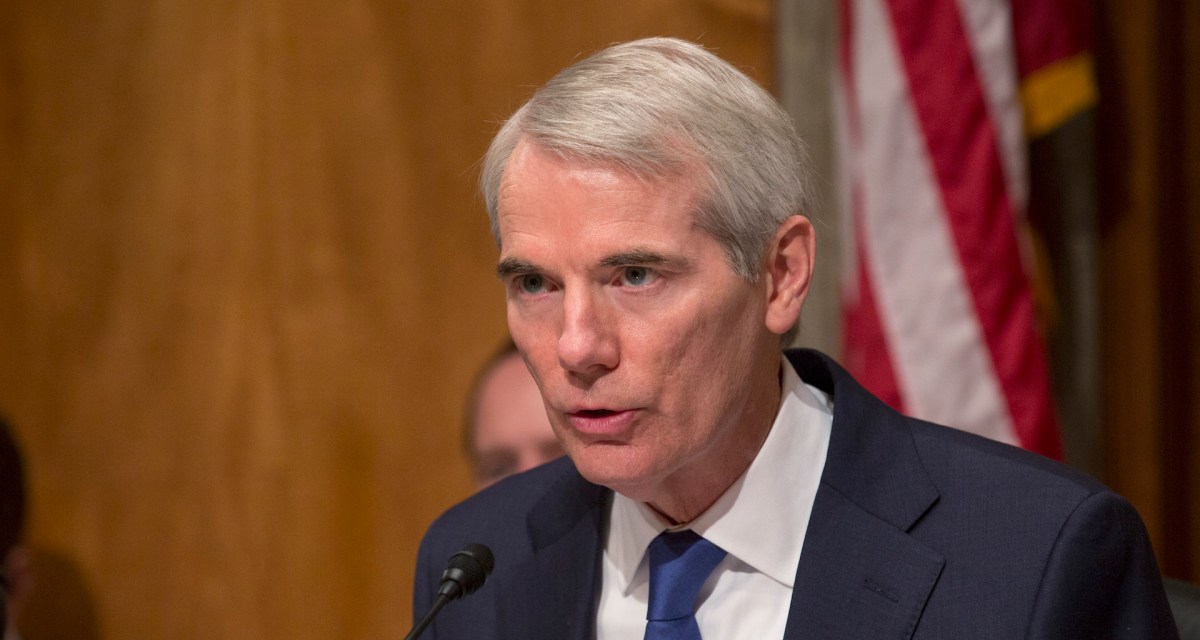

The caucus, composed of Sens. Martin Heinrich, D-N.M., Rob Portman, R-Ohio, Brian Schatz, D-Hawaii, Cory Gardner, R-Colo., Gary Peters, D-Mich., and Joni Ernst, R-Iowa, will help members of Congress and their staff connect with outside artificial intelligence experts.

“AI is likely to be one of the most transformative technologies of all time,” Portman said in a statement. “AI is a mix of promise and pitfall, which means, as policymakers, we need to be clear-eyed about its potential.” The caucus will work to make sure that “Congress is home to the substantive conversations necessary to make responsible policy about emerging technology and ensure AI works for, and not against, American citizens and U.S. competitiveness,” he added.

The formation of the caucus comes about a month after President Donald Trump signed an executive order outlining his administration’s national AI plan. The “American AI Initiative” lays out a “multi-pronged approach” to the challenge of continued American leadership in this technology. It directs agencies to prioritize AI in their research and development budgets, make data available to researchers and create training programs to help workers gain AI-relevant tech skills, among other measures.

Congress has faced various questions about the implications of AI-powered technologies over the past year. For example, in July, the American Civil Liberties Union published a study showing that sitting members of Congress could themselves be misidentified by facial recognition technology. Using Amazon’s Rekognition software, the group compared a photo set of sitting members of Congress to a database of publicly available arrest photos. The software, according to the ACLU, incorrectly identified 28 sitting members of Congress as individuals who have been arrested. Lawmakers, predictably, had some questions.

Amazon argued that the study is misleading and that the ACLU used incorrect settings to get its results. “Machine learning is a very valuable tool to help law enforcement agencies, and while being concerned it’s applied correctly, we should not throw away the oven because the temperature could be set wrong and burn the pizza,” Matt Wood, AWS’ head of machine learning, wrote in a blog post. “It is a very reasonable idea, however, for the government to weigh in and specify what temperature (or confidence levels) it wants law enforcement agencies to meet to assist in their public safety work.”

The task of weighing in remains ahead. The senators say they will consider how AI developers can maintain “important ethical standards.”