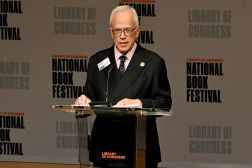

Congressional AI Caucus leader Ted Lieu says most AI should not be regulated

Rep. Ted Lieu, D-Calif., said Thursday that most artificial intelligence will likely not be regulated by the government, but that regulation will be needed where AI could harm or even kill people.

“My analogy from the perspective of a lawmaker is that most of AI we’re not going to regulate,” Lieu said during a Washington Post Live event on the Future of Work on Thursday. He noted that most AI technology, such as smart toasters for bagels and other seemingly innocuous applications, will not need to be regulated.

However, Lieu, a key member of the Congressional AI Caucus and one of three members of Congress with a computer science degree, said AI tools that could hurt or kill individuals, like those built into planes, trains, cars, and other sectors where human life is at risk, will need regulation. Because of that, he said, the federal government will need more AI-trained regulators who understand the unique aspects of AI in those fields.

“So, think of two bodies of water: a large ocean of AI, and then this small lake of AI. So this large ocean is all the AI we don’t care about… The small lake of AI is AI we might want to think about. And to me, there’s three buckets [in that small lake]. The first is … AI that can destroy the world. Second is AI that isn’t going to destroy the world but can kill you individually…. And that last bucket, which is really the hardest, is AI that has some sort of harm to society,” said Lieu.

He added that the hardest uses of AI to control or regulate are those whose harm to society is more subjective, such as unfair monetization, algorithms that discriminate or are biased, and AI-driven facial recognition.

As a solution, Lieu pointed to bipartisan legislation he introduced in June, which proposes that Congress create a bipartisan blue-ribbon commission on AI to make policy and legal recommendations to Congress on how best to regulate the technology.

Earlier this year, Lieu introduced the first measure in Congress written entirely by the generative AI tool ChatGPT: a nonbinding resolution on how Congress could comprehensively regulate AI. Lieu’s office is also one of the first not to restrict the use of ChatGPT for internal functions, the California congressman said.

FedScoop first reported in April that the House of Representatives’ digital service had obtained 40 licenses of ChatGPT Plus, the first publicized congressional use of the popular AI tool. House offices said they were using ChatGPT for generating constituent response drafts and press documents, summarizing large amounts of text in speeches, and drafting policy papers or, in some cases, bill language.

Lieu has previously argued that federal agencies need the authority and resources to better address the risks and concerns associated with AI, something he hopes his proposed blue-ribbon commission could help with.

“So I think we need to get more regulators in our federal agencies who are more cognizant and attuned to the unique risks and aspects of AI,” Lieu said in June.