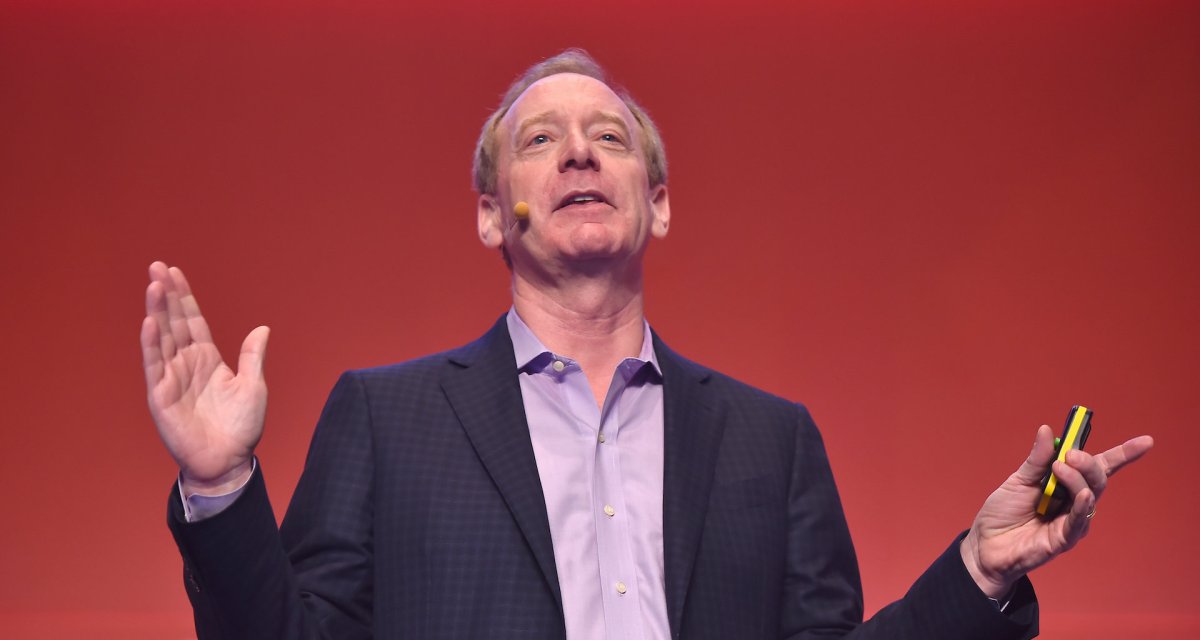

Microsoft president won’t commit to moratorium on sale of facial recognition to federal law enforcement

When asked Thursday for a firm answer about selling facial recognition technology to federal law enforcement, Microsoft’s president promised only that his company won’t provide it in scenarios that lead to bias against minorities and women.

Brad Smith, in an interview on Politico Live, addressed the nationwide protests against systemic racism and Microsoft’s decision to wait until a federal law regulating facial recognition is passed before selling the technology to police.

Smith said Microsoft doesn’t currently sell facial recognition to any federal law enforcement agency. He then echoed a point such agencies have recently made: most have missions that extend beyond law enforcement into national security.

“We won’t allow our technology to be used in any manner that puts people’s fundamental rights at risk,” Smith said. “Now I will say, look, the federal government is a broad government. There’s a lot more than law enforcement that’s involved.”

For that reason, the government needs to begin a “more thoughtful conversation” on a facial recognition law, he added.

The American Civil Liberties Union released emails Wednesday — obtained as part of a lawsuit against the Drug Enforcement Administration — detailing Microsoft’s efforts to sell, pilot and train personnel to use its facial recognition product between September 2017 and December 2018.

As of November 2018, the DEA had piloted, but not purchased, Microsoft’s product because of criticism of the FBI’s use of similar technology, according to the emails.

“Even after belatedly promising not to sell face surveillance tech to police last week, Microsoft has refused to say whether it would sell the technology to federal agencies like the DEA,” said Nathan Freed Wessler, senior staff attorney with the ACLU’s Speech, Privacy, and Technology Project, in the announcement. “It is troubling enough to learn that Microsoft tried to sell a dangerous technology to the U.S. Drug Enforcement Administration given that agency’s record spearheading the racist drug war, and even more disturbing now that Attorney General Bill Barr has reportedly expanded this very agency’s surveillance authorities, which could be abused to spy on people protesting police brutality.”

Of the three companies (IBM, Amazon and Microsoft) to announce limits on the sale of facial recognition, Microsoft was the only one to submit algorithms to the National Institute of Standards and Technology for review. The algorithms, reviewed in 2018, generally ranked as the most or second-most accurate among submissions from about 150 companies, depending on the performance metric.

In March, Washington state passed the first law limiting state and local agencies’ use of facial recognition. Under it, providers must allow their products to be tested, and police must obtain a warrant to use the technology in investigations except in an emergency.

The law could serve as a model for other states and the federal government, Smith said.

“We need to start teasing this issue apart to understand it better and move just beyond a binary conversation of ‘permit it or ban it’ and think about what is the right way to regulate it,” Smith said. “That’s where most progress ultimately comes.”

In response to the company’s moratorium on facial recognition sales to police, former acting Director of National Intelligence Richard Grenell tweeted on June 12 that Microsoft should be barred from federal contracts. President Trump retweeted it.

https://twitter.com/RichardGrenell/status/1271303615206928384

Smith said he hasn’t yet spoken with the president since then.

“It was a tweet, and we’re focused on trying to do the right thing every day,” Smith said.

“We recognize that there will be issues on which we agree and on issues we disagree, and we never make anything personal,” he added. “We always try to stand up for principles, and we always want to maintain a constructive level of conversation.”