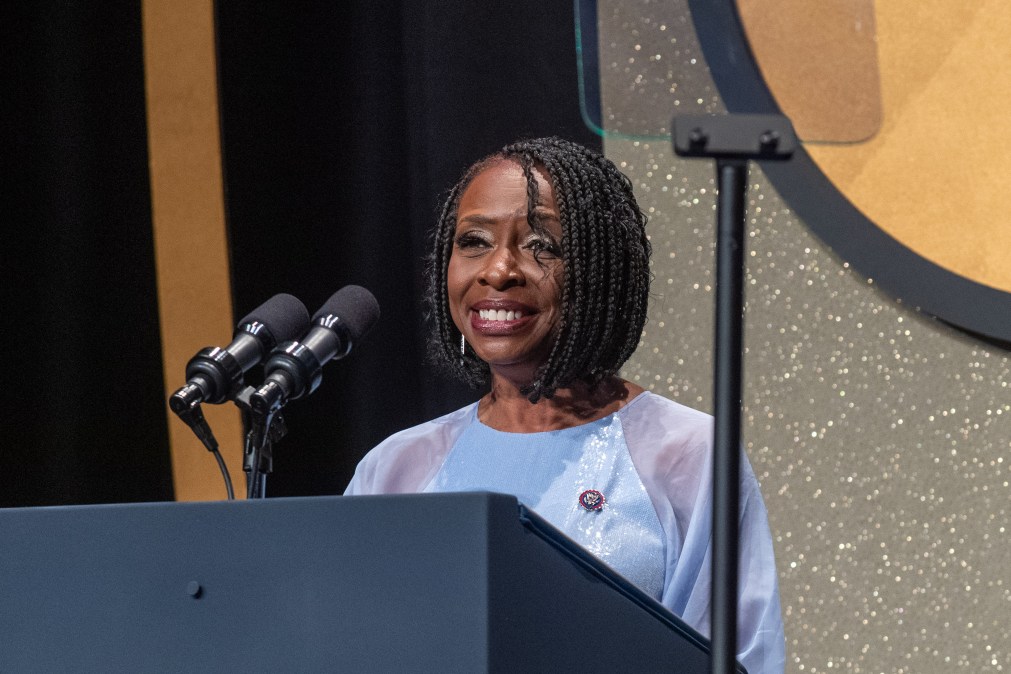

With House AI task force, Rep. Yvette Clarke is ready to ‘get to the table’

Earlier this week, House Speaker Mike Johnson, R-La., and Minority Leader Hakeem Jeffries, D-N.Y., finally announced the creation of a new bipartisan task force on artificial intelligence. While a similar initiative had been underway with the previous Republican leadership, and there are at least two AI-related efforts on the Democratic side, the new task force marks a major step forward in legislating with representation from both parties.

Rep. Yvette Clarke — a Democrat from New York who was one of the first members of Congress to call out the potential harms of artificial intelligence — said in a recent interview that she hopes the task force, which now counts her as a member, can focus on the basics of the technology, including data privacy and algorithmic bias. She emphasized that she’s “not happy about the time it’s taken” for legislators to pay attention to the issue, which has seen increased focus in the aftermath of the White House’s executive order on the technology and Senator Chuck Schumer’s AI insight forums.

“At the end of the day, we’re still waiting for legislation to be passed. We have already done the data privacy bill and that bill has been waiting in abeyance for quite some time,” Clarke told FedScoop, which has been speaking with House members focused on regulating the technology. “Let’s all get to the table. Let’s unpack what’s before us and let’s talk about how we legislate what needs to be dealt with in this session and into the next.”

Notably, Clarke has already put forward several proposals related to artificial intelligence, including a bill that would ban biometric technologies in public housing, another that would require companies to perform bias assessments for AI technology used to make critical decisions, and legislation to curb the risks created by deepfake technology.

In an interview, the congresswoman discussed her expectations for the new AI task force, her current worries about AI technology, and the prospects for new regulation. At the same time, she expressed concerns about relying on voluntary commitments from AI companies, which may also try to influence the emerging process for developing legislation. And she pointed out that lawmakers, in many ways, have yet to act on some of the core issues underlying the challenges raised by the technology.

“We are working to make sure that our agencies have resources to do the work [and] that our corporate partners understand that they have a moral obligation — aside from a financial incentive — to do right by the American people,” Clarke said. “Our ability to excel as a nation is tied to getting this right.”

Editor’s note: The transcript has been edited for clarity and length.

FedScoop: You’ve had proposals for dealing with deepfakes and auditing AI tech, and now you’re joining this bipartisan AI task force. I’m curious: are you happy with the progress Congress has made on this issue since you first started following this?

Rep. Yvette Clarke: I’m not happy about the time it’s taken. There were indicators years ago — quite frankly — that without this technology having protocols and guardrails, it could be harmful to the American people. It was already harming people. I’m happy, however, that we’ve come to this point where the Speaker and the Leader have appointed members to do this work so that we can protect the American people, while at the same time embracing this new innovative technology.

FS: Are there specific issues or specific pieces of legislation that you’re optimistic about?

YC: My hope is that we’ll look at the whole ecosystem, from beginning to end. I think it’s important that we all have a similar baseline from which we’re working. I, for a long time, have been advocating for data privacy, which is really to me at the foundation of AI. I also was very concerned about bias and algorithms, which is another component. I’m hoping that we will delve into those areas, as well as look at … what we need to do in terms of rules of the road for it to be a healthy ecosystem.

FS: There’s a lot of discussion about funding AI research and making sure the U.S. is competitive. At the same time, you have focused on some of the nefarious and negative consequences of the technology. How do we not let the first distract from the latter?

YC: The capabilities are a really important component of this. Right now, we’re not making major investments in AI research. I’m certain, if you bring academia to the table, a lot of the research has already been done. The issue for us is a lack of knowledge — quite frankly — or varying levels of knowledge. Establishing that baseline is going to be critical for all of us. And at that point in time, we can determine what type of investments we need to make. But so much of this is in the corporate domain. We’ve got to have a clear understanding of exactly how the current construct has been growing organically.

FS: How concerned are you about lobbying or the influence of firms like OpenAI and Microsoft in shaping the foundation of what AI legislation looks like in the United States or regulations at federal agencies?

YC: That’s always gonna be a component of legislation, right? The question is, as legislators, whose interests are we looking out for? I’m at the table for the American people. At the end of the day, we won’t know what we don’t know until we get to the table and have those conversations. There may be members who are coming to the table already carrying an agenda for those interests that would like to shape this.

FS: You’ve expressed concern about the voluntary aspect of some of the recent AI policy announcements. Why does that worry you?

YC: At the end of the day, companies have a profit motive. There may be blind spots that they’re not taking into account. Typically, what we’ve experienced in our society is that it’s a lot less expensive to be preventative than it is to find a cure. Whenever there’s a cost, it’s always passed on to the consumer. It’s critical that we not be wholly owned subsidiaries of those who would say that they’re voluntarily taking into account our findings or who are preceding us with new protocols. We need to factor in everything that’s taking place and determine whether, in fact, it’s in the best interest of the American people.

FS: What do you make of some of the leadership of those companies that talk about artificial general intelligence or a super smart AI, in a dystopian way, destroying everything?

YC: We can all speak to the potential of what AI can and cannot do. However, at the end of the day, it’s critical that we not get ahead of ourselves, but that we, again, build a strong foundation to assess how those harms can manifest themselves [and] what we need to do to protect ourselves. AI is a growing industry. There’s certain things that are taking place today that will be at the core of how this technology proceeds into the future.

FS: You were very early in calling for regulation of facial recognition — a form of AI — and in calling for a ban of facial recognition in public housing. Where does the conversation on regulating that technology stand right now in Congress?

YC: There hasn’t been a real hearing or a real discussion around it … Facial recognition is being used at airports, a whole host of other venues, and even Madison Square Garden … I don’t have the impression that that conversation is taking place because, particularly, law enforcement entities are purchasing this technology without the integrity of it being questioned.

FS: I’m curious about the extent to which you’re worried about AI-generated content this election season?

YC: I have been sounding the alarm for quite some time. We’ve seen already AI-generated political ads or political statements being put out there. Some of them disclose that they’re AI generated and others have not. Clearly, this is a major, major, major concern given the contentious nature of our politics … There’s nothing that really stands in the way of that, other than sort of the goodwill of some of the platforms that said that they will be monitoring and requiring disclosure.

FS: I’m wondering if you think federal agencies are doing enough to deal with AI within the regulatory authority they already have?

YC: Certainly the FCC, CISA, and Homeland Security are very aware … It’s a huge, huge endeavor on many different levels because each federal agency has a sort of a different level of engagement around cyber protocols. That creates vulnerabilities.

This is that moment I’m hoping that we begin a bipartisan conversation around how we protect the American people, how we maximize on the federal enterprise in doing so, and what regulatory regime will be required, if any. I’m certain there will be some, given the Wild West nature of the current state of play. We are working to make sure that our agencies have resources to do the work [and] that our corporate partners understand that they have a moral obligation — aside from a financial incentive — to do right by the American people … Our ability to excel as a nation is tied to getting this right.

FS: How are you thinking about dealing with AI that’s built and created in other countries where we don’t have regulatory authority?

YC: What I’m hoping we can accomplish is that we become a leader in this space [and] that we maintain our leadership in the space. When the U.S. has protocols in place, typically, they become universally adopted. However, we can’t regulate what others do in other nations. They have their own sovereignty. I can tell you that currently, particularly in Europe, they are moving ahead forcefully and protecting their civil societies. In that regard, we are a bit behind the curve.

FS: So my final question is: what AI issue is worrying you most right now?

YC: There are some foundational areas that we have not strengthened and that we have not established guardrails around that, baked into the culture of AI, will be devastating, that will have devastating impacts on our society, on our way of life. I’d want to see us do much more around data privacy.