Trump nominates new chief of federal procurement policy

Michael Wooten is President Donald Trump’s pick to serve as administrator of the Office of Federal Procurement Policy.

In heading the office, under the portfolio of the Office of Management and Budget, Wooten would essentially serve as the government’s chief acquisition officer. IT acquisition is a major element of the job, which is subject to Senate confirmation. Trump announced the nomination Wednesday.

Most recently, Wooten has served as senior adviser for acquisitions at the Department of Education’s Federal Student Aid office. Within Education, he also was acting assistant secretary and deputy assistant secretary for career, technical, and adult education. Prior to that, he was deputy chief procurement officer for the District of Columbia Government.

If Senate-confirmed, Wooten will be the first OFPP administrator to lead the office in an official capacity since the Obama administration. Anne Rung left the position in September 2016 to join Amazon, and since then, Lesley Field has filled the position in an acting capacity.

OMB sets deadline for agency FOIA interoperability plans

The White House Office of Management and Budget is asking agencies to get their National FOIA Portal interoperability plans in order.

The FOIA Improvement Act of 2016 required that OMB and the Department of Justice simplify the Freedom of Information Act landscape by creating a central portal that anyone can visit to submit a request to any agency. Today, that portal lives at FOIA.gov.

But not all agencies have established interoperability between FOIA.gov and the agency’s existing FOIA platform. Deputy Director for Management Margaret Weichert sent a memo Tuesday setting a deadline of May 10, 2019, for agencies to submit a full strategy for how their in-house platform, which can range from a simple spreadsheet to an automated case management system depending on agency need and resources, will play nice with FOIA.gov.

Not all agencies are linked to FOIA.gov. (Screenshot)

The memo lays out the two ways agencies can achieve interoperability — either by accepting requests through a structured application programming interface (API) or by accepting the request as a formal, structured e-mail to a designated email inbox. If an agency has an automated FOIA system, OMB says, it needs to go the API route unless otherwise agreed.

In any case, though, agencies have until May 10 to let OMB know which option they will pursue, how long it will take and what it will cost. From there, deadlines are a little less clear but the memo states that email interoperability should be in place “as soon as technically feasible,” and API interoperability available within two fiscal years.
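The routing rule described above can be sketched in a few lines of Python. This is only an illustration of the rule as this article summarizes it, not anything taken from the memo itself. The names `Route`, `Agency` and `allowed_routes` are hypothetical, and treating an agreed exception as reopening both options is an assumption.

```python
from dataclasses import dataclass
from enum import Enum


class Route(Enum):
    API = "structured API"
    EMAIL = "structured e-mail to a designated inbox"


@dataclass
class Agency:
    name: str                           # hypothetical field names
    has_automated_foia_system: bool
    exception_agreed: bool = False      # models "unless otherwise agreed"


def allowed_routes(agency: Agency) -> set:
    """Return the interoperability routes open to an agency.

    Assumption: an agency with an automated FOIA system is limited to
    the API route unless an exception has been agreed; every other
    agency may choose either route.
    """
    if agency.has_automated_foia_system and not agency.exception_agreed:
        return {Route.API}
    return {Route.API, Route.EMAIL}


if __name__ == "__main__":
    for agency in (
        Agency("Agency with case management system", True),
        Agency("Agency tracking requests in a spreadsheet", False),
    ):
        names = sorted(route.name for route in allowed_routes(agency))
        print(agency.name, "->", names)
```

Returning a set rather than a single choice keeps the sketch honest about what the article actually states: agencies without an automated system still have to pick one option and report it to OMB by the deadline.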

In addition to ensuring this interoperability, agencies have a few other responsibilities that have to do with the FOIA.gov portal. Per the memo, agencies are required to maintain an account on FOIA.gov and keep agency contact information up to date there. Agencies are also required to “maintain a customized FOIA request form tailored to its own FOIA regulations.”

DOD clarifies security requirements to compete for $8B back-office cloud

Eventually, the contractor that will lead the Pentagon’s single-award, $8 billion back-office cloud acquisition will need to be able to store and process Secret-level information. But it’s OK if the vendor hasn’t yet achieved that capability at the time of the award, the Pentagon clarified this week.

The two-phase vision for the Defense Enterprise Office Solution is to provide communication, collaboration and other back-office tools via a hybrid-cloud model to DOD users, first within U.S. territories and then outside. The entire solution will need to have DOD impact level 6 capabilities, meaning it has met security requirements to handle Secret information.

But the need for those Secret-level capabilities is a bit down the line. And during the buildout of DEOS phase one — which deals with unclassified networks in the continental U.S. — the chosen cloud vendor needs to meet only impact level 5 security requirements, for storing and processing controlled unclassified information for national security systems.

“At this time we anticipate that the final RFQ will reflect that an offeror will not be required to possess IL6 at the time of the award in order to be eligible for the award,” says the Q&A, posted as an amendment to the DEOS draft solicitation.

“Candidates must have a certified Impact Level 5 (IL5) offering for infrastructure, platform, or software as a service approved requirement to successfully compete,” it says. “Market research indicates that there are sufficient vendors with DoD Cloud Computing (CC) Security Requirements Guide (SRG) Impact Level 5 (IL5) to facilitate competition and ensure timely delivery of the first phase of delivery – services for non-classified networks in the continental United States.”

For now, the draft solicitation doesn’t explain how a cloud provider that is level 5-compliant will show that it’s on track for level 6 compliance down the road.

As the Q&A explains, there’s a crowd — albeit a small one — of vendors who meet level 5 requirements: Amazon Web Services, IBM, Microsoft and Oracle. But from that group, only AWS has achieved level 6. Microsoft has said it could do so sometime in 2019.

This is the same approach DOD will take with its Joint Enterprise Defense Infrastructure (JEDI) cloud, first requiring level 5 compliance and then level 6 down the line. That enterprisewide, commercial cloud, however, will be the anchor of DOD’s move to adopt next-generation cloud capabilities, the department revealed last week in its cloud strategy.

ID.me brings virtual identity proofing to the VA

There’s an 81-year-old veteran living in Japan who’s been trying, and failing, to sign up for a Veteran ID card online — he can’t seem to verify his international phone number. There’s a 19-year-old service member trying to get access to his GI Bill Benefits through VA.gov, but the agency can’t confirm his identity because he doesn’t have any credit history.

There are many more veterans just like these.

Traditionally, veterans who cannot complete the convenient online registration process that the Department of Veterans Affairs offers, which requires things like a stable address and a credit history, have been unable to sufficiently prove their identities online. So, they’re forced to go to a VA field office in person, an extra hurdle that can prove to be a complete roadblock, depending on the veteran’s circumstances.

Now there’s another option — Virtual In-Person Identity Proofing enabled by digital identity provider ID.me. After a four-month pilot, ID.me and the VA announced the official launch of this digital service Tuesday.

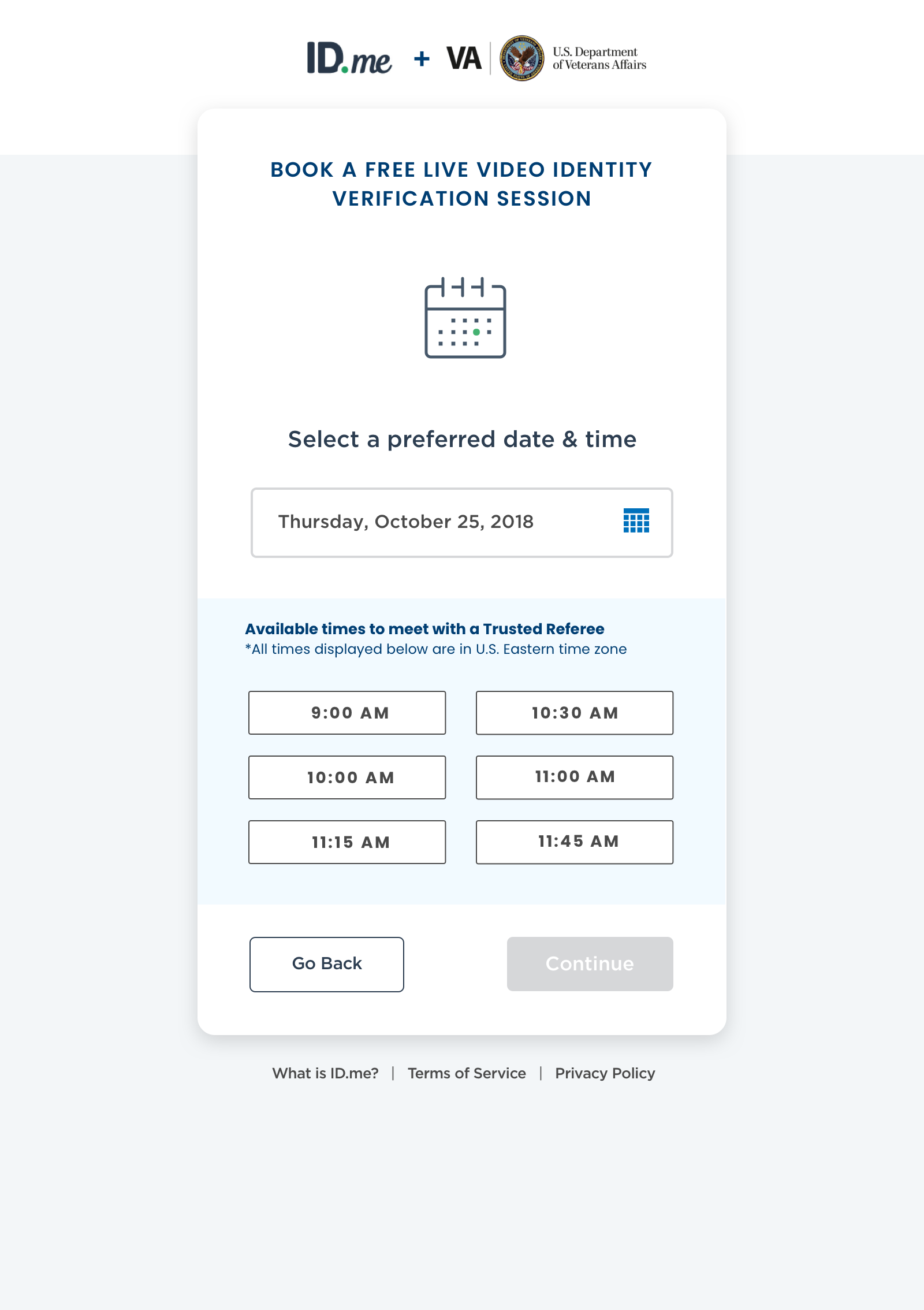

The interface for Veterans setting up a virtual identity proofing session. (Courtesy photo)

The technology is essentially what it sounds like — veterans who need help verifying their identities online can set up a virtual meeting (via video chat) with a “trusted referee” who will examine various identity documents in much the same way as an employee at a VA field office would.

“As part of the digital modernization of the VA, it is important that all Veterans can securely access their benefits and services online,” Charles Worthington, CTO at the VA, said in a statement. “Adding Virtual In-Person Identity Proofing is a critical step towards making sure that no Veteran is left behind.”

As is often the case in IT modernization, it was a policy development, not a technological one, that got us here. The National Institute of Standards and Technology is in charge of developing digital identity guidelines for the federal government. In June 2017 the agency released Special Publication 800-63-3, which outlines the requirements for verifying identity virtually. ID.me, a recipient of NIST grant funding, developed its tech offering around these guidelines.

To ID.me founder Blake Hall, a former soldier himself, helping veterans is especially meaningful. But he sees a future for this product at agencies beyond the VA. “Everyone runs into this problem where thin-file customers, international customers, aren’t able to prove their identity,” he told FedScoop. “We can also expand this to other agencies.”

Pentagon unveils strategy for military adoption of artificial intelligence

The Department of Defense issued an unclassified summary Tuesday of its strategy for the accelerated adoption of artificial intelligence for military applications.

Then-Deputy Secretary Patrick Shanahan approved the classified strategy in June 2018. The summary further details many things DOD has revealed since last June, like the Joint Artificial Intelligence Center (JAIC), which is the focal point of the strategy.

The unclassified document emphasizes the “urgency, scale, and unity of effort” needed to make AI transformational both for the DOD and the nation. In that respect, CIO Dana Deasy told reporters Tuesday, it aligns well with the AI executive order President Donald Trump issued Monday, which introduced the administration’s plan for American leadership in the development of artificial intelligence.

Perhaps the most central theme throughout the strategy summary, Deasy said, is “the need to increase the speed and agility, which is how we will deliver AI capabilities across every DOD mission.”

“We do not have time to waste,” he said. “We must be careful not to give a long-term advantage to our adversaries.”

Operationalized AI with JAIC

The Pentagon’s AI strategy is all about near-term, departmentwide operational capabilities supported through JAIC.

Lt. Gen. Jack Shanahan, JAIC’s first director, explained that his office “underscores the importance of transitioning from research and development to operational-fielded capabilities. The JAIC will operate across the full AI application lifecycle, with emphasis on near-term execution and AI adoption.”

As revealed previously, JAIC is focused on “national mission initiatives” (NMIs) — which are broad, cross-functional programs that impact more than one mission or agency — as well as “component mission initiatives” (CMIs), which “are specific to individual components who are looking for an AI solution to a particular problem,” the general told reporters.

Already, JAIC is piloting two NMIs: one focused on predictive maintenance and another on humanitarian assistance and disaster relief. Others are in the works, such as a project with the U.S. Cyber Command on a “cyberspace-related NMI,” Shanahan said.

From these projects, it is DOD’s intention to establish “a common foundation enabled by enterprise cloud with particular focus on shared data repositories, reusable tools, frameworks and standards, and cloud and edge services,” he said.

That is why, for example, one of DOD’s first NMIs is focused on disaster relief, particularly identifying fire lines in wildfire disasters, which is not something you might associate with DOD.

“This a perfect example of us reaching out and working with other agencies. … When you actually get down to the real science of what we’re doing with this humanitarian one — it’s taking large, large areas using video, using still, and using AI to determine what is going on,” Deasy said. “A lot of this is going to be applicable elsewhere in the Department of Defense. In picking that as an NMI, we are also picking this as a great one where we can build the tools — we talk about tools that are going to end up inside of JAIC as an asset that others can use inside the Department of Defense. This is a perfect example of one that can help us build out our tools.”

The DOD is massive, however, and of course military services and other DOD components will explore AI for their own needs. This is where CMIs come into play, and JAIC hopes to synchronize those disparate DOD AI activities and align them for enterprisewide efficiencies.

Deasy said JAIC’s level of success will be measured in its ability to attract those components to take advantage of its resources.

“At the end of the day, no matter who is standing up an AI capability, they’re going to need governance, they’re going to need processes, they’re going to need tools, they’re going to need infrastructure,” he said. “So what we’ve said is JAIC’s success is going to be based on our ability to create common tools, common processes, common development methodologies, common infrastructure where you can get jumpstarted a lot faster than going out and starting something on your own. The services are really keen on that.”

The strategy also comes with the stipulation that any DOD AI project that costs more than $15 million must be vetted by JAIC first.

There are also other research entities, like DARPA, that deal with AI, but over the longer term. Deasy said JAIC’s role will be to feed off that R&D to bring real, immediate applications to the military.

“This needs to be a balanced conversation” between R&D and operation, he said. “What JAIC is focused on is the actual applied application of taking all that science that’s available from either the academic world or the commercial world and then applying it to real-world solutions. But then keeping tightly linked to the research side of where this is going, where the art of the possible is going to take you.”

But JAIC is still in its early days, Deasy said. Though it’s up and running with some work underway, JAIC started small — the big transformation and scaling of the organization will take place in its second year, he said.

So far, it’s received less than $90 million in funding, most of it for research and development, and is staffed by military detailees. The hope is that the fiscal 2020 budget will really reflect the growth strategy of JAIC.

The ethics of militarized AI, the Google problem and autonomous weapons

The biggest piece of the DOD AI strategy still missing is a set of ethical principles — but it’s in the works. Though DOD is moving forward standing up JAIC and launching mission initiatives, the Defense Innovation Board (DIB) is in the process of developing ethical principles for the department’s use of AI.

Some companies have been hesitant to work with DOD on AI and other high-tech initiatives because of the possibility that their tech could be used for lethal applications. Google is the poster company for this turmoil after it stepped away from work supporting DOD’s Project Maven, which uses algorithms and AI to help Air Force analysts make better use of full-motion video surveillance.

“I think it’s very important the Defense Innovation Board is doing this,” said Shanahan, who has led Project Maven for the past two years since its inception. “It doesn’t stop us from going forward. This is an important point … in every technology the department has ever introduced, we take this into account early and often. The idea of human judgment, safe, lawful use of any weapons system, and then, as important as anything else, is the issue of accountability. A human is held accountable. … We are taking this into account from the day we start working on any project.”

Deasy said the work on the front end of DOD adopting AI is too urgent and intensive to wait for the DIB’s development of principles. “There is a lot of work we have to do to stand up a joint artificial shared capability. So we can keep moving down the road setting that up while the DIB works through those big questions that they’ll eventually bring back to us with their thoughts on.”

While Google’s refusal to continue work with Project Maven, and then its decision to not bid on DOD’s Joint Enterprise Defense Infrastructure (JEDI) enterprise cloud, have gotten a lot of public attention, Deasy and Shanahan said that has been the exception, not the rule.

“Our experience has been, with very few exceptions, an enthusiasm about working with the Department of Defense,” Shanahan said, explaining the importance of being upfront with companies about the application of their technologies to the DOD mission. “There’s questions we ask in that problem framing to make sure we all understand on both sides of the line what they’re working on for the Department of Defense, what the Department of Defense intends to use that model for and we haven’t had any real problems with that.”

Deasy said the DOD accepts that some companies don’t want to work with it. “We’ve got some of the most difficult, challenging and important problems to solve. So far, we’ve seen no evidence that there is something we’re missing out on if a company chooses not to participate in our mission sets going forward. We are getting lots and lots of inquiries.”

That tune, however, may change the closer DOD gets to deploying futuristic things now only seen in movies and TV shows. Shanahan said the department’s adoption of AI doesn’t involve autonomous weapons “right now.”

“And that’s what people get most skittish about what the department is or is not doing,” he said, noting that the Pentagon has an existing directive for autonomous weapons. “We are taking those policies into account, but we haven’t progressed to the point in the JAIC or in Maven where that has become the driver of whether or not we go forward with a project. We are nowhere close to the full autonomy question that most people seem to leap to a conclusion when they think about DOD and AI.”

Deasy didn’t count out the possibility of it either.

“We want JAIC to support the full breadth of what the Department of Defense needs to do to accomplish its missions — if that’s deterrence, if that’s lethality, if that’s reform, if that’s creating better alliances and partnerships,” he said.

GSA and NGA award contracts for ‘earth observation’ technologies

The General Services Administration announced Tuesday that it has teamed up with the National Geospatial-Intelligence Agency to award a number of blanket purchase agreements (BPAs) for “earth observation” products, services and tools.

The awards will fall under the earth observation special item number (SIN) on GSA’s IT Schedule 70 governmentwide contract. SINs are specialized subcategories within GSA’s acquisition schedules that make it easier for buyers and sellers to navigate the often broad contracts.

Awards have been made to:

- Carahsoft Technology Corp.

- DigitalGlobe Intelligence Solutions, Inc.

- General Dynamics Information Technology, Inc.

- Geographic Services Inc.

- Harris Corporation

- Hexagon US Federal, Inc.

- Leidos, Inc.

- MDA Information Systems LLC

- Observera, Inc.

- Sanborn Map Company

- Wiser Imagery Services LLC

These selected companies are now part of GSA’s “one-stop-shop” for products or services in the geospatial intelligence space and will be able to sell to agencies across the federal government. Collectively, they will provide things like sensor data, advanced analytics, machine learning, predictive analysis and more to customer agencies.

Using a BPA, instead of another contract vehicle, simplifies and speeds up the acquisition process for agencies. Per GSA, the government has a “growing need” for earth observation technologies like satellite imagery, communication, analytics and more, and thus a growing need to have easy access to this kind of tech.

“The commercial earth observation industry has experienced accelerated growth and we’re very pleased to position our offerings to provide the latest in emerging technology and solutions while making it easier for our government customers to reach these companies,” Bill Zielinski, GSA’s acting assistant commissioner for the Office of Information Technology, said in a statement.

The earth observation SIN, organized under IT Schedule 70, was created in partnership with NGA in summer 2017. It’s a key part of NGA’s Commercial Initiative to Buy Operationally Responsive GEOINT, an initiative with the truly fantastic acronym CIBORG.

Membership is set for the Committee for the Modernization of Congress

The newly created Select Committee for the Modernization of Congress is ready to begin its work as House Minority Leader Kevin McCarthy, R-Calif., has announced his picks for the Republican side of the panel.

Rep. Tom Graves, R-Ga., will fill the role of vice chairman, McCarthy announced Friday, and he’ll be joined by Rob Woodall, R-Ga., Susan Brooks, R-Ind., Rodney Davis, R-Ill., Dan Newhouse, R-Wash., and William Timmons, R-S.C.

“This committee cuts to the core of what the House of Representatives strives for every day: a direct conversation with the American people in an effort to solve problems and make our country and communities better,” McCarthy said in a statement. “Technology has unquestionably improved House productivity, but we must aspire to do better when it comes to connecting with and serving the American people. I couldn’t think of a better member of our conference than Tom to lead this institution into a new era of effective, efficient, and accountable governance.”

McCarthy’s appointments complete the membership of the select committee, which was created in early January as part of the House rules package for the new Congress. Speaker Nancy Pelosi, D-Calif., made her selections on Jan. 29, naming Derek Kilmer, D-Wash., the chairman. Pelosi’s other selections were Emanuel Cleaver, D-Mo., Suzan DelBene, D-Wash., Zoe Lofgren, D-Calif., Mark Pocan, D-Wis., and Mary Gay Scanlon, D-Pa.

“With Congressman Kilmer at the head of the table, this Select Committee will strengthen and reinvigorate our institution, advancing a House of Representatives that is diverse, dynamic, oriented toward the future and committed to delivering results For The People,” Pelosi said in a statement when making her appointments.

The committee’s modernization mandate ranges from staff diversity and HR issues to tech infrastructure updates and beyond. What it will choose to focus on remains to be seen, but advocates from both sides of the political spectrum say there’s a huge opportunity for the legislative branch to give its IT some needed attention.

TMF Board awards $20.7M to accelerate GSA’s NewPay shared service

In its third round of funding, the Technology Modernization Fund Board has issued a single $20.7 million award to the General Services Administration to accelerate development of its modernized payroll shared service, NewPay.

This is now the seventh modernization project to receive TMF funding, bringing the grand total awarded to $90 million of the $100 million appropriated for the fund in fiscal 2018.

NewPay is a cloud-based payroll and personnel system to be used governmentwide as a shared service. GSA announced the program in 2018 and issued a $2.5 billion blanket purchase agreement to a pair of teams providing different solutions: The first team consists of Carahsoft Technology Corporation, Immix Technology and Deloitte Consulting LLP, and will provide Kronos and SAP solutions; and the second team, led by Grant Thornton with The Arcanum Group, Inc. and CGI Federal, will provide services from Infor.

According to the project’s description on the TMF website: “Without this funding, GSA would need to delay establishing the cloud-enabled SaaS solution until a future year when dedicated upfront funding could be secured from the current user base or other sources instead of paying for the investment over a period of years. However, with the support from the TMF the project can be conducted in FY 2019 as a single effort to stand up the initial payroll and [work schedule and leave management] capabilities with current customers, with completion in two years.”

TMF Board Chairwoman and Federal CIO Suzette Kent touted NewPay’s function as a shared service as a reason for its selection.

“As the last proposal accepted in 2018, the Board believes the NewPay proposal is a critical step forward to transform an antiquated technical and operational process,” Kent said. “The TMF was created to invest in modern commercial solutions and drive faster adoption of shared services. We are pleased to support a project that will accelerate the journey to make available modern payroll services for all agencies and drive efficiency across government.”

In a release, OMB says the TMF Board “continues to accept and evaluate high impact, high return on investment projects from agencies.”

However, the program faces a critical juncture. With this latest award, only $10 million remains to fund any additional rounds of projects.

Meanwhile, Congress hasn’t yet finalized TMF appropriations for fiscal 2019, as the bill that funds the initiative is held up in the ongoing impasse on Capitol Hill centered around securing the U.S.’s southern border.

But even before the political feud that led to a 35-day shutdown, and now a continuing resolution that expires later this week, the TMF was at risk of being zeroed out in 2019.

There is hope, though. One of the latest House appropriations bills, which was never passed, would have appropriated $25 million to the TMF.

Rep. Will Hurd, R-Texas, who authored the Modernizing Government Technology Act, which gave life to the TMF, said last year he is confident Congress will fund the program in 2019.

GSA’s NewPay is the largest TMF award yet. The board issued a total of $45 million to modernization projects in June 2018 at the departments of Agriculture, Energy and Housing and Urban Development in its first round of awards. Then, last October, it awarded $23.5 million to projects at the Department of Labor, Department of Agriculture and the General Services Administration.
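As a quick check on the figures quoted in this story, the three round totals can be added up against the $100 million appropriation. The short Python sketch below uses only the dollar amounts reported above; the variable names are illustrative. The sums come to slightly less than the $90 million awarded and $10 million remaining cited earlier, which suggests those figures are rounded.

```python
# All figures are in millions of dollars, as reported in this article.
APPROPRIATED_FY2018 = 100.0

round_totals = {
    "round 1 (June 2018)": 45.0,
    "round 2 (October 2018)": 23.5,
    "round 3 (NewPay)": 20.7,
}

# Round to one decimal place to avoid floating-point noise in the output.
total_awarded = round(sum(round_totals.values()), 1)
remaining = round(APPROPRIATED_FY2018 - total_awarded, 1)

print(f"Total awarded: ${total_awarded} million")
print(f"Remaining:     ${remaining} million")
```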

Veterans to get iPhone health record access this summer

The Department of Veterans Affairs plans to release a new capability this summer that will allow veterans who receive care from the VA to access their health records on Apple iPhones.

Using the iPhone’s Health app, veterans will be able to “see an aggregated view of their allergies, conditions, immunizations, lab results, medications, procedures and vitals,” according to the VA. And because the app’s Health Records feature works with other private health care providers, it will allow veterans to bring together their data from multiple providers, including the VA, in one place.

“When patients have better access to their health information, they have more productive conversations with their physicians,” said Jeff Williams, Apple’s COO. “By bringing Health Records on iPhone to VA patients, we hope veterans will experience improved healthcare that will enhance their lives.”

Within 24 hours of visiting a VA facility, veterans will be able to access their updated health information in Apple’s Health app. The department says it expects to expand access to other platforms down the line.

This capability is driven by the VA’s development of the Veterans Health API, announced in December.

“Our Health API represents the next stage in the evolution of VA’s patient data access capability,” VA Secretary Robert Wilkie said in a statement. “By building upon the Veterans Health API, we’re raising the bar in collaborating with private sector organizations to create and deploy innovative digital products for Veterans. Veterans should be able to access their health data at any time, and I’m proud of how far we’ve come to accomplishing this.”

VA is touting the iPhone function as an evolution of veterans’ ability to access their health records more easily, beginning with VA Blue Button in 2010.

President Trump signs executive order on national AI strategy

President Donald Trump signed an executive order Monday revealing his administration’s cohesive plan for American leadership in the development of artificial intelligence.

The “American AI Initiative” lays out a “multi-pronged approach” to the challenge, focused around five pillars. First, the order directs federal agencies to prioritize AI when crafting research and development budgets. It also prompts agencies to make data and computing resources available to AI researchers outside the government, asks them to set up fellowship programs and trainings to help workers gain AI-relevant tech skills, and commits to international collaboration on AI with America’s allies.

Finally, the order calls for the development of an AI governance framework. “As AI moves from the research laboratory to commercialization, regulatory guidance must also be updated to promote innovation, while also building public trust in AI,” a senior administration official said in a press call. The White House Office of Science and Technology Policy, the Domestic Policy Council and the National Economic Council, together with regulatory agencies, are tasked with creating this guidance.

“In last week’s State of the Union address, President Trump committed to investing in cutting-edge Industries of the Future,” OSTP Deputy CTO Michael Kratsios said in a statement. “The American AI Initiative follows up on that promise with decisive action to ensure AI is developed and applied for the benefit of the American people. With a strong focus on prioritizing R&D investment, removing barriers to innovation, and preparing America’s workforce for jobs of today and tomorrow, the Initiative helps strengthen American leadership while remaining true to our Nation’s values and priorities.”

Various parts of the executive order aren’t totally new. President Trump already directed agencies to focus on AI R&D through his 2020 budget priorities memo. The interest in developing a governance strategy that doesn’t stifle innovation hearkens back to basically every speech Kratsios has given during his tenure. And there are also a bunch of different AI initiatives already taking place across the federal government, from the Department of Defense’s Joint AI Center to the Defense Advanced Research Projects Agency’s $2 billion AI Next campaign and more.

But the document itself is new for the U.S., which has been lagging behind peer nations, allied and otherwise, in developing and publicizing a cohesive national AI strategy. In recent months various groups have argued that America needs such a document — most recently, in December 2018, the Center for Data Innovation released a report on the matter.

“Absent an AI strategy tailored to the United States’ political economy, U.S. firms developing AI will lose their advantage in global markets and U.S. organizations will adopt AI at a less-than-optimal pace,” the center argued at the time.